Deploy a Image Classification Model

This document will help you on how to deploy a custom image classification (huggingface: microsoft/beit-base-patch16-224-pt22k-ft22k) model.

Before we start the deployment process, please follow the below formats for every file:

The model must contain the following pre-requisite files:

- requirement.txt - Text file containing all your required packages along with the version.

pandas==x.x.x

transformers==x.x.x

Pillow==x.x.x

torch==x.x.x

- schema.py - This file will contain the type of input that will be excepted by your endpoint API. The template for the file is shown below:

from pydantic import BaseModel

# sample Predict Schema

# Make sure key is data and your data can be of anytype

class PredictSchema(BaseModel):

data: str

- launch.py - This is the most important file, containing loadmodel, preprocessing and prediction functions. The template for the file is shown below:

Note: loadmodel and prediction functions are compulsory. preprocessing function is optional based on the data you are passing to the system. By default false is returned from preprocessing function.

loadmodel takes logger object, please do not define your own logging object. preprocessing takes the data and logger object, prediction takes preprocessed data, model and logger object.

from PIL import Image

import requests

import json

from transformers import BeitFeatureExtractor, BeitForImageClassification

def loadmodel(logger):

"""Get model from cloud object storage."""

logger.info("getting model")

model = BeitForImageClassification.from_pretrained(

"microsoft/beit-base-patch16-224-pt22k-ft22k"

)

return model

def preprocessing(url: str, logger):

""" Applies preprocessing techniques to the raw data"""

def loaddata(url):

# url = 'http://images.cocodataset.org/val2017/000000039769.jpg'

image = Image.open(requests.get(url, stream=True).raw)

return image

# Apply all preprocessing techniques inside this to your input data, if required and return data

# else if no preprocesssing is required by data then just return False as below

# return False

image = loaddata(url)

logger.info("loaded model")

feature_extractor = BeitFeatureExtractor.from_pretrained(

"microsoft/beit-base-patch16-224-pt22k-ft22k"

)

inputs = feature_extractor(images=image, return_tensors="pt")

return inputs

def predict(features, model, logger):

"""Predicts the results for the given inputs"""

outputs = model(**features)

logits = outputs.logits

# model predicts one of the 21, 841 ImageNet-22k classes

predicted_class_idx = logits.argmax(-1).item()

result_class = model.config.id2label[predicted_class_idx]

predicted_result = {"Predicted class": result_class}

pred_results = json.dumps(predicted_result)

return pred_results

- labels_file.json: - This file is required for feedback or performance metrics calculations of binary or multi-class classification problems.

Note: Please note that these classes should be in encoded format (eg. ["cat", "dog] will be [0, 1] or [1, 2]) for both binay and multi-class problems.

# labels_file.json for multi-class classification, you can define your own classes here

{"labels": [1, 2, 3, 4, 5, 6]}

# labels_file.json for binary classification, you can define your own classes here

{"labels": [1, 2]} or [0, 1]

Once you prepared the required files you can proceed with the deployment.

Note: Before Deployment the prepared required files should be inside the GitHub Repository.

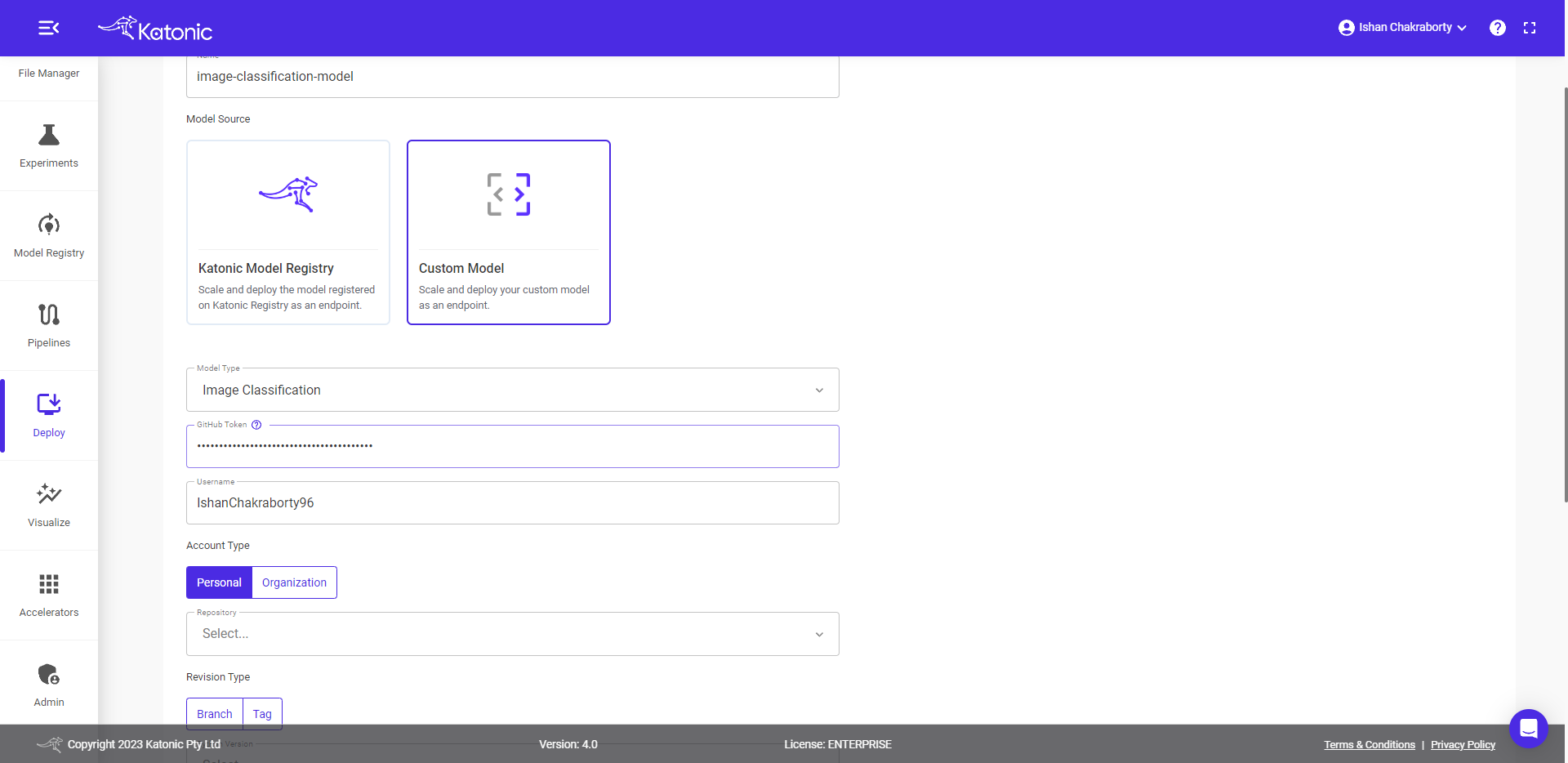

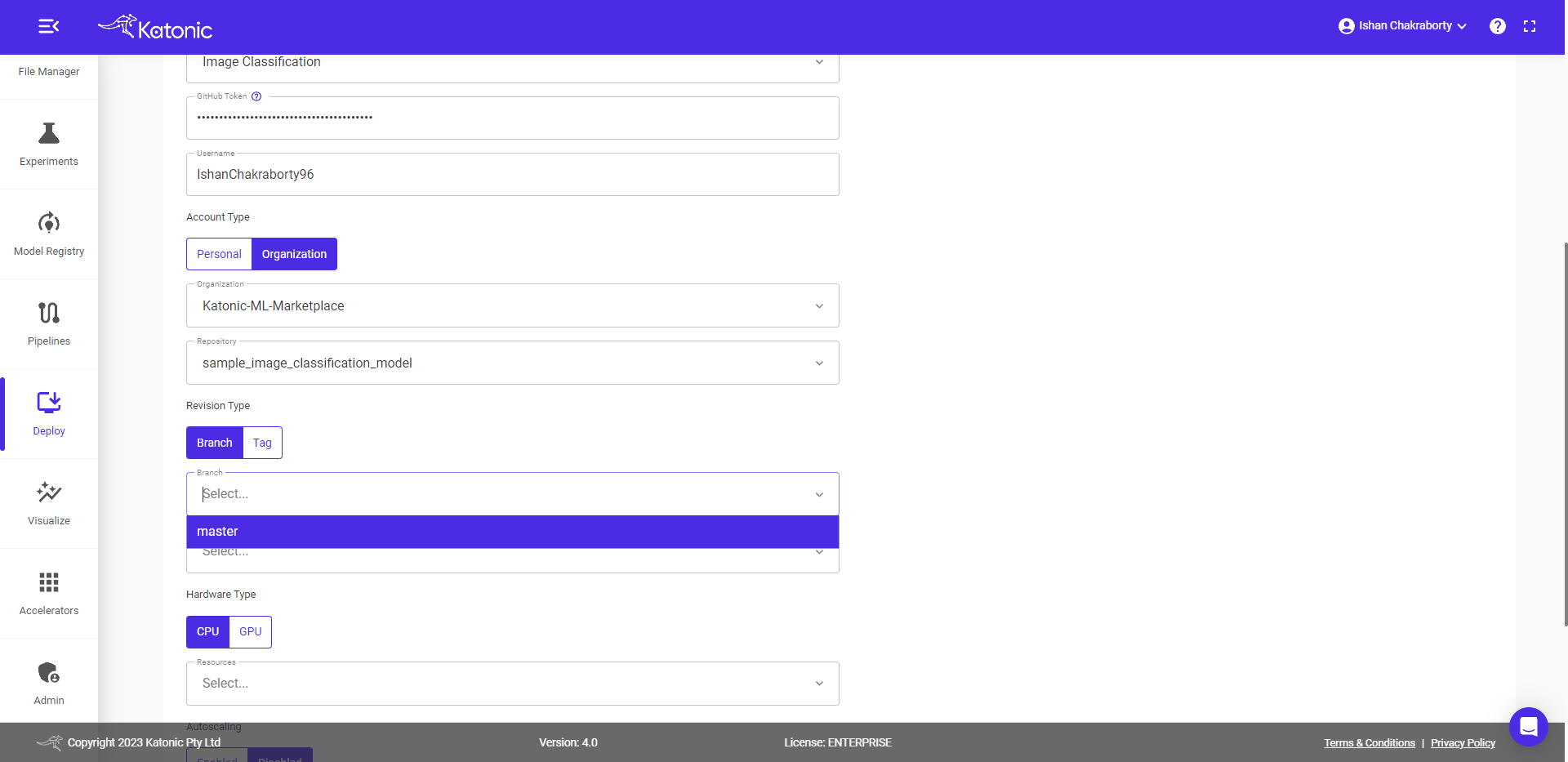

How to deploy your image classification model using Custom Deployment

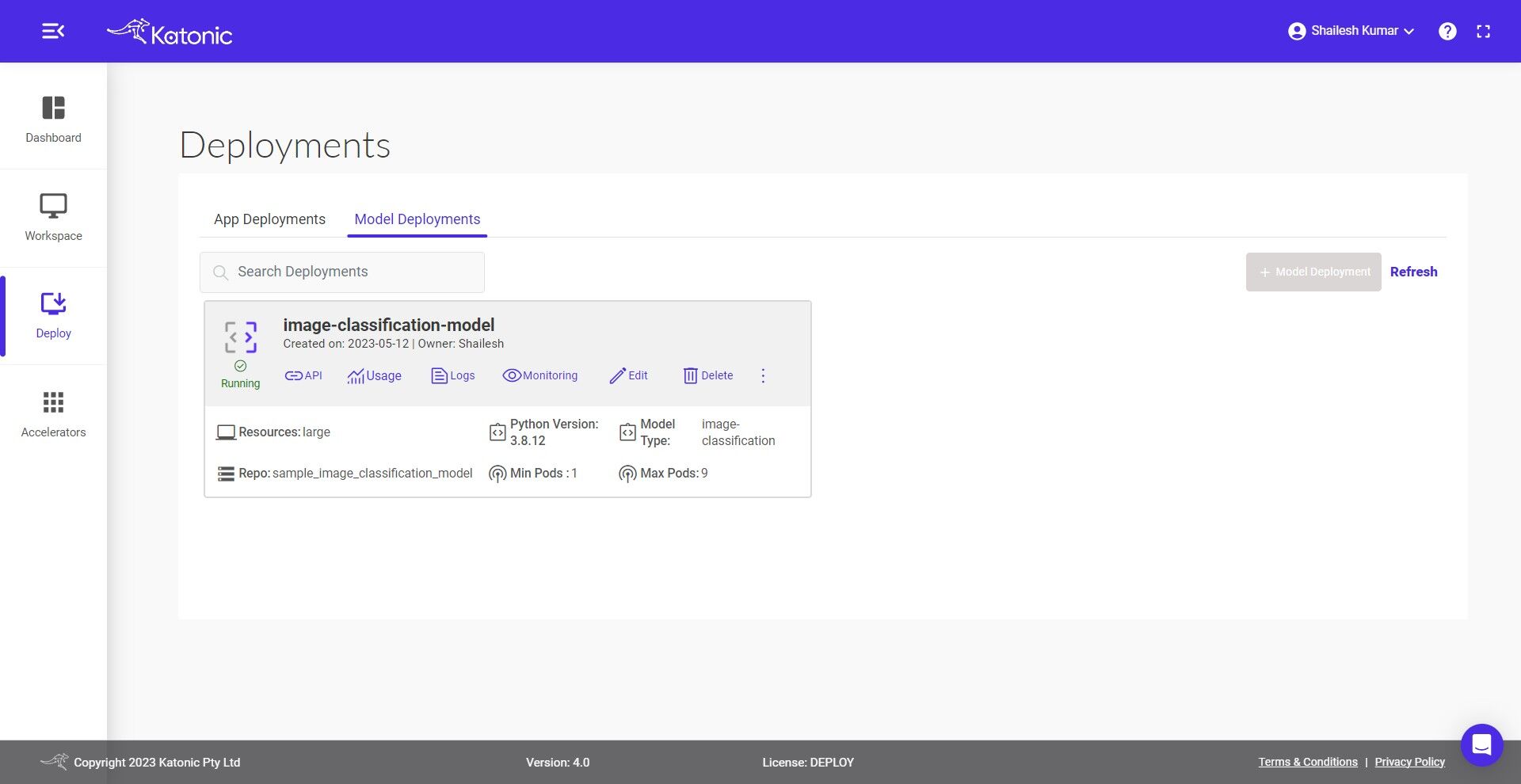

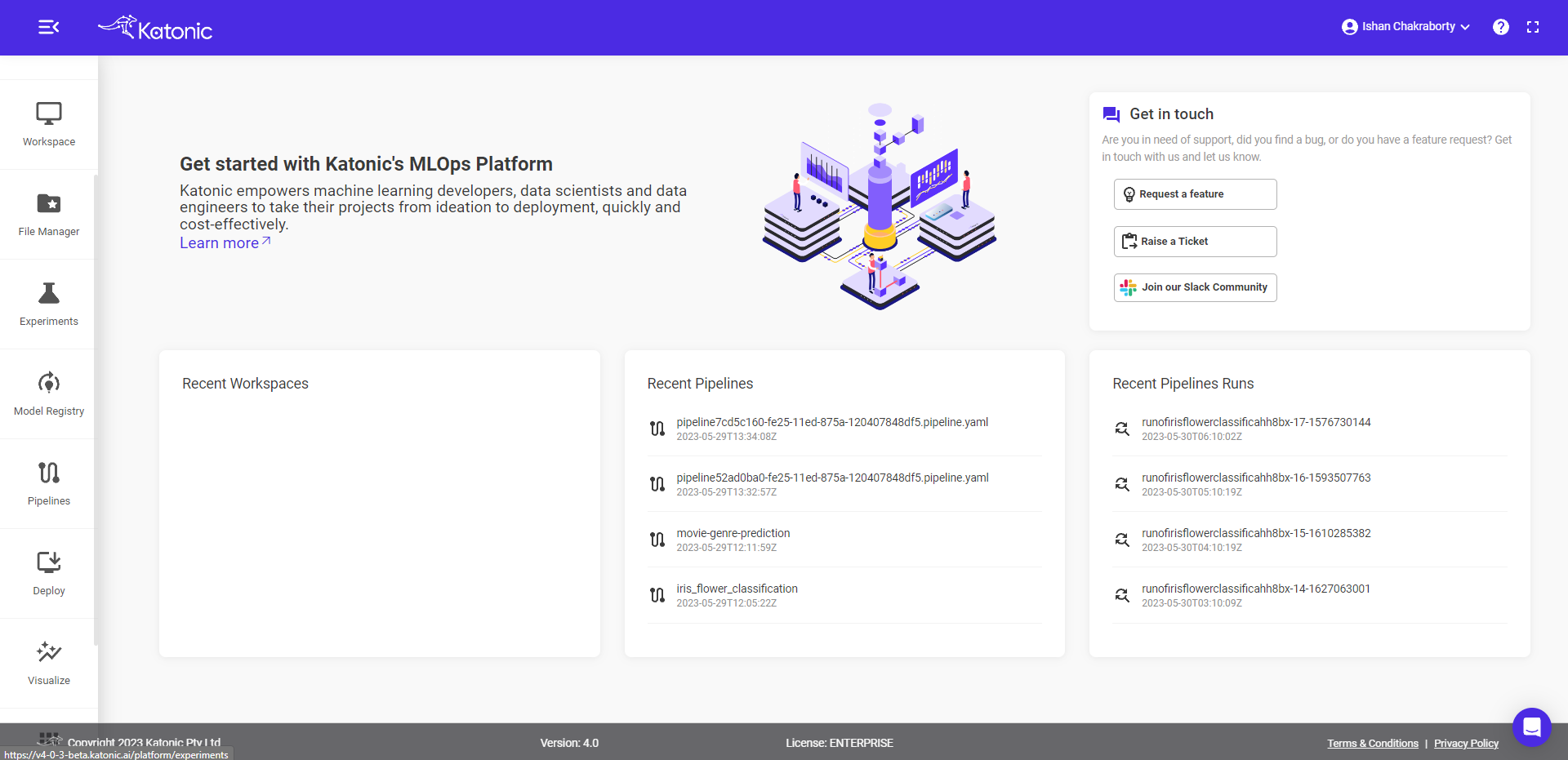

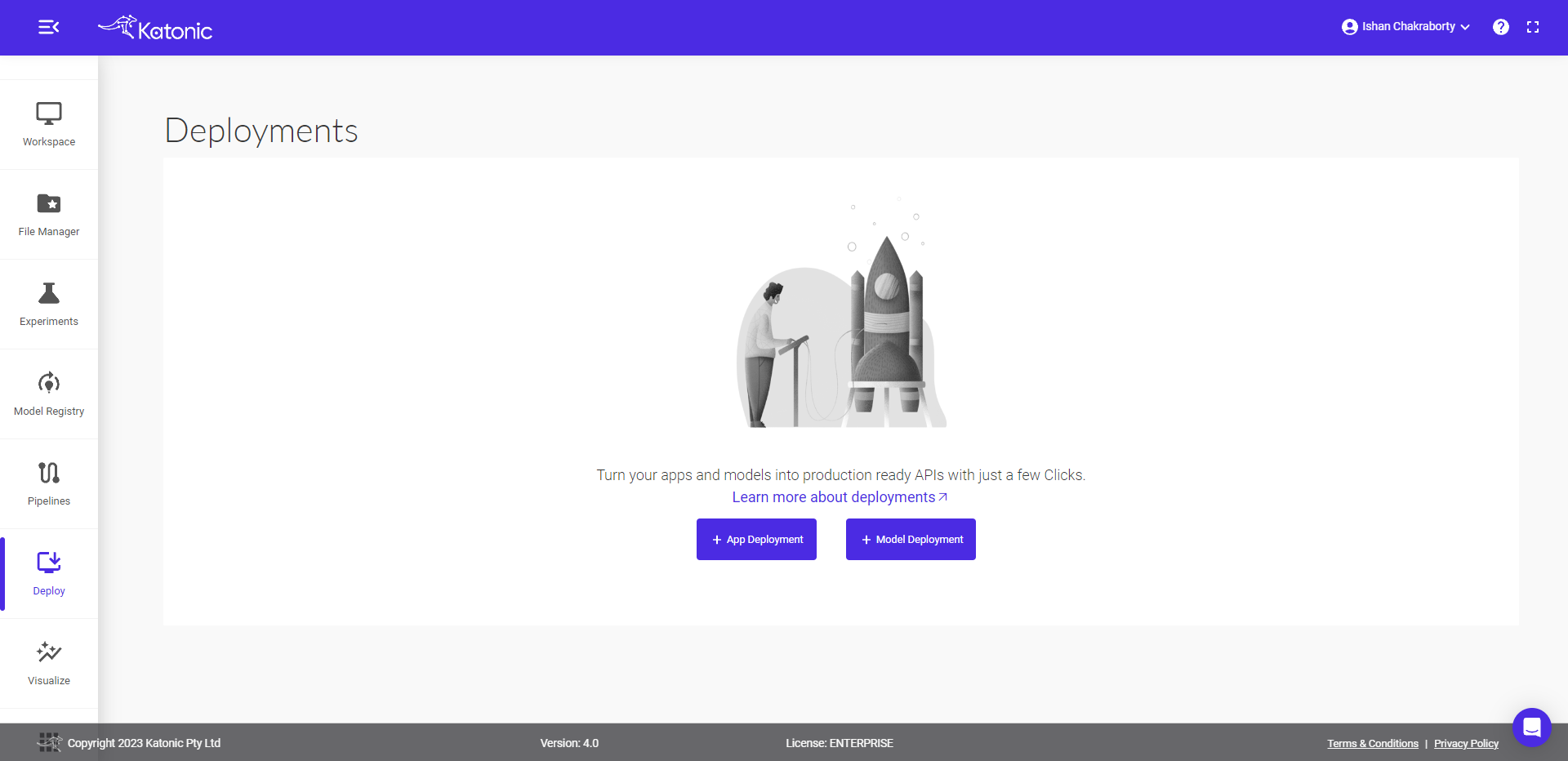

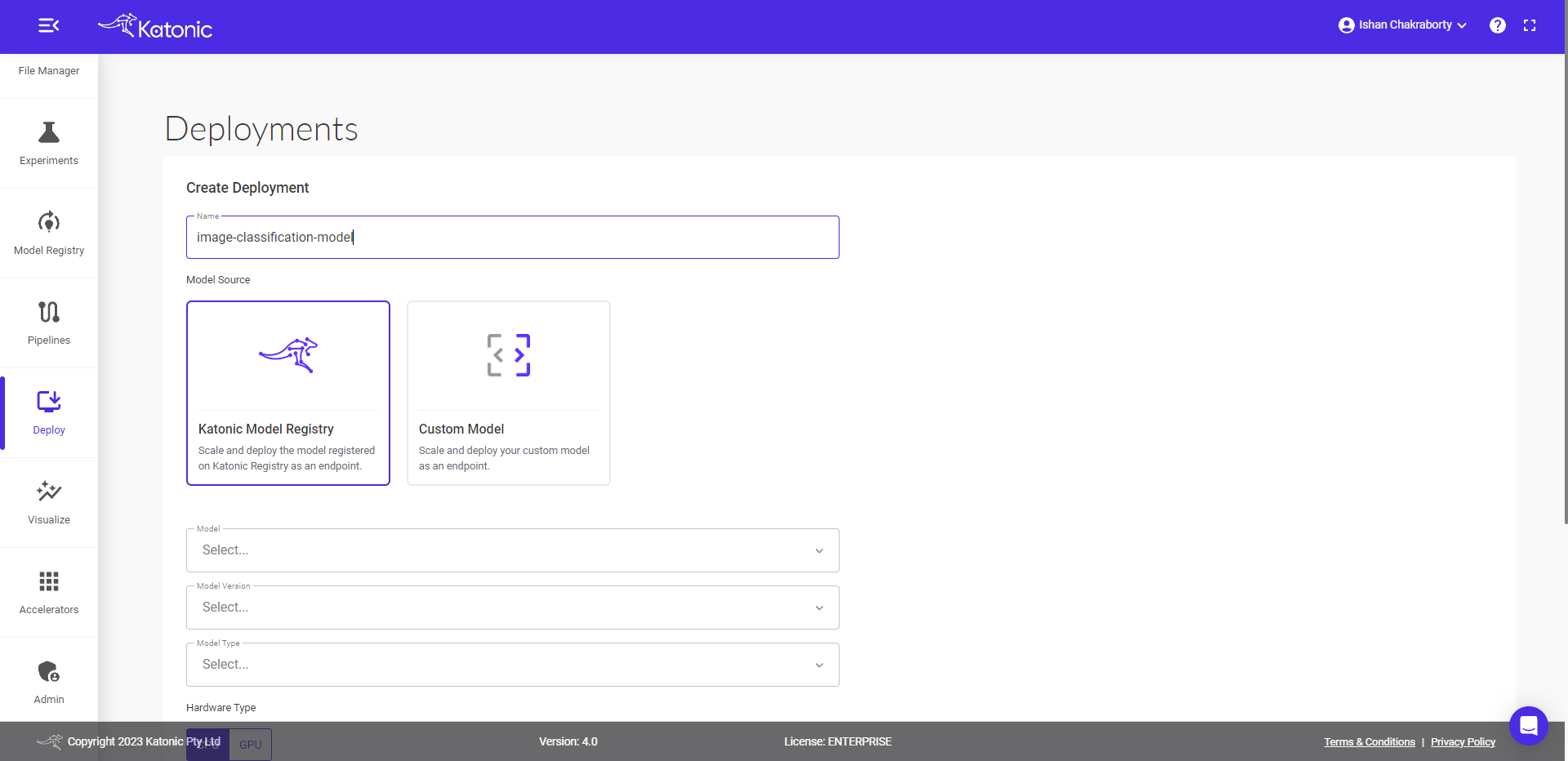

- Navigate to Deploy section from sidebar on the platform.

- Select the Model Deployment option from the bottom.

- Fill the model details in the dialog box.

- Give a Name to your deployment, for example regression-model or audio-to-speech and proceed to the next field.

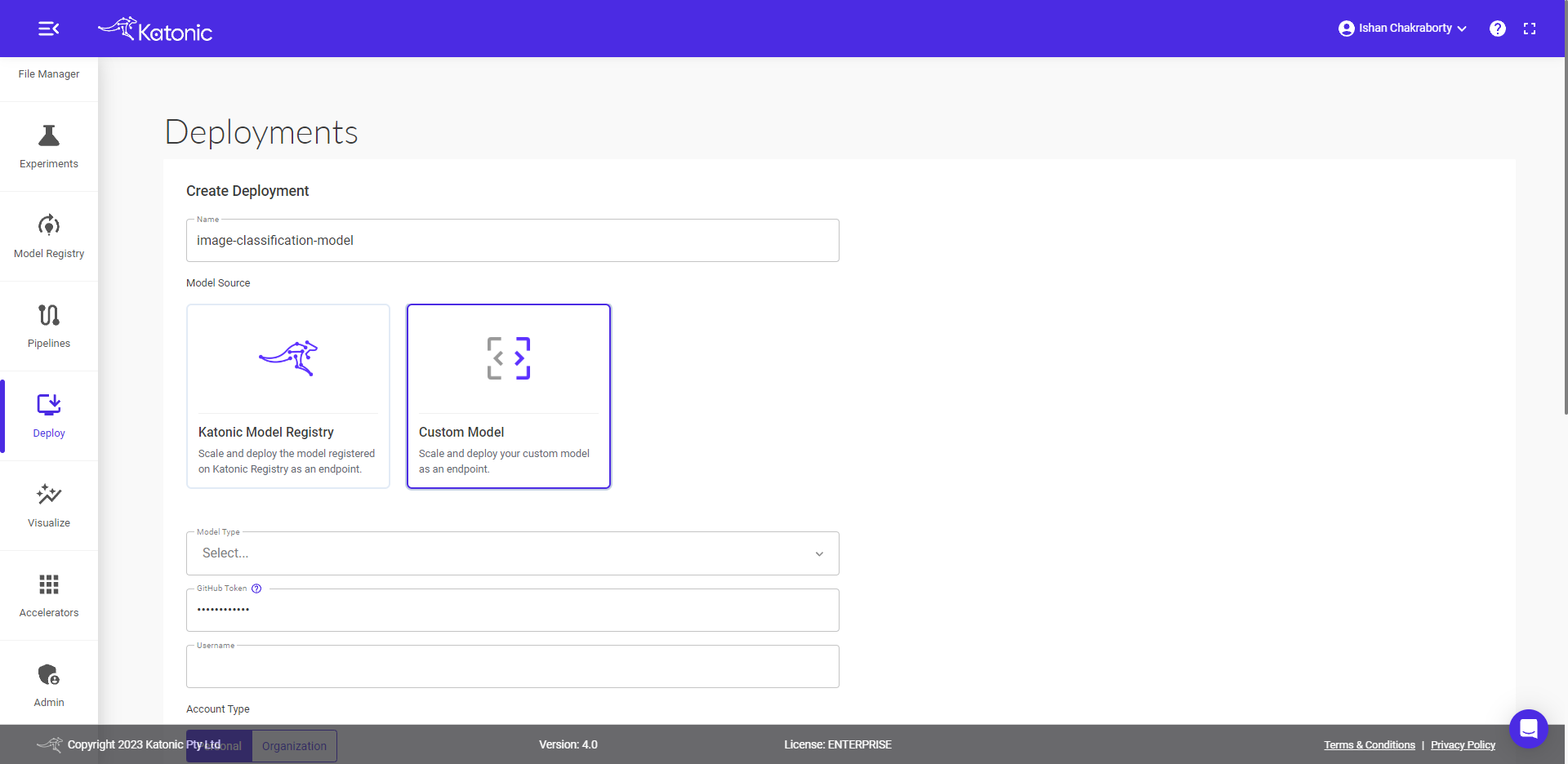

- Select Custom Model under Model Source option.

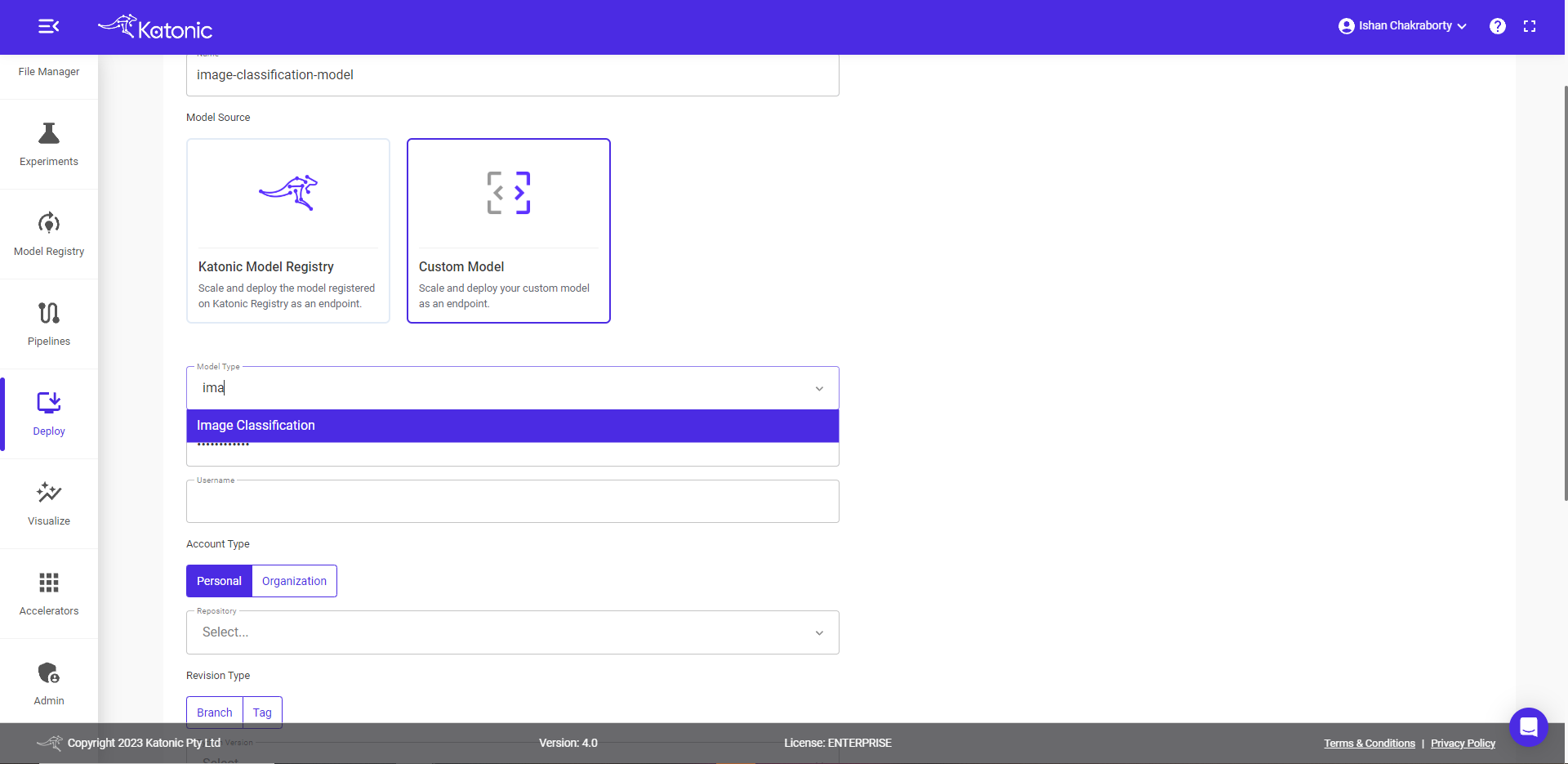

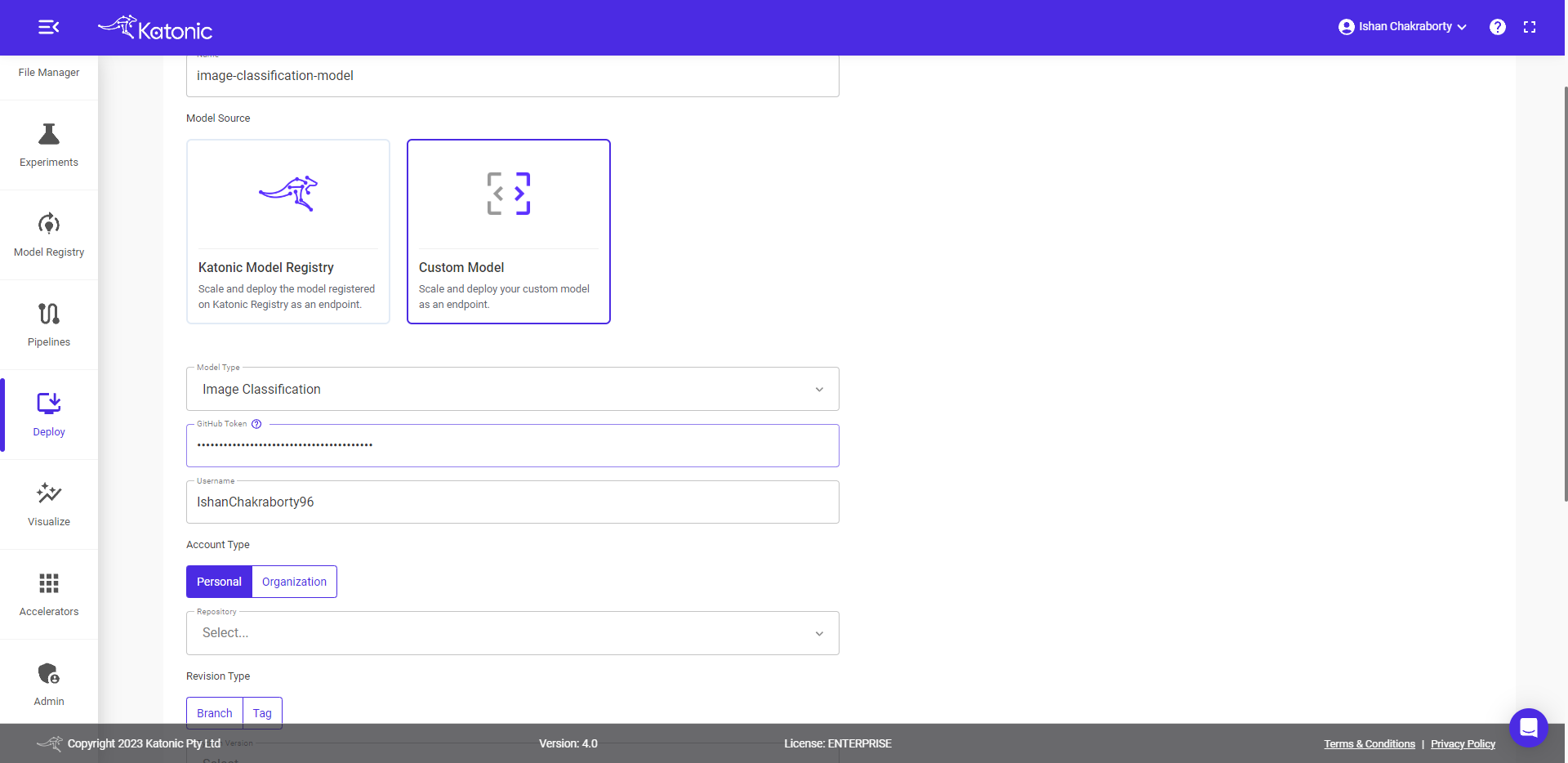

- Select Model type for eg., in this case it is Image Classification..

- Provide the GitHub token.

Your username will appear once the token is passed.

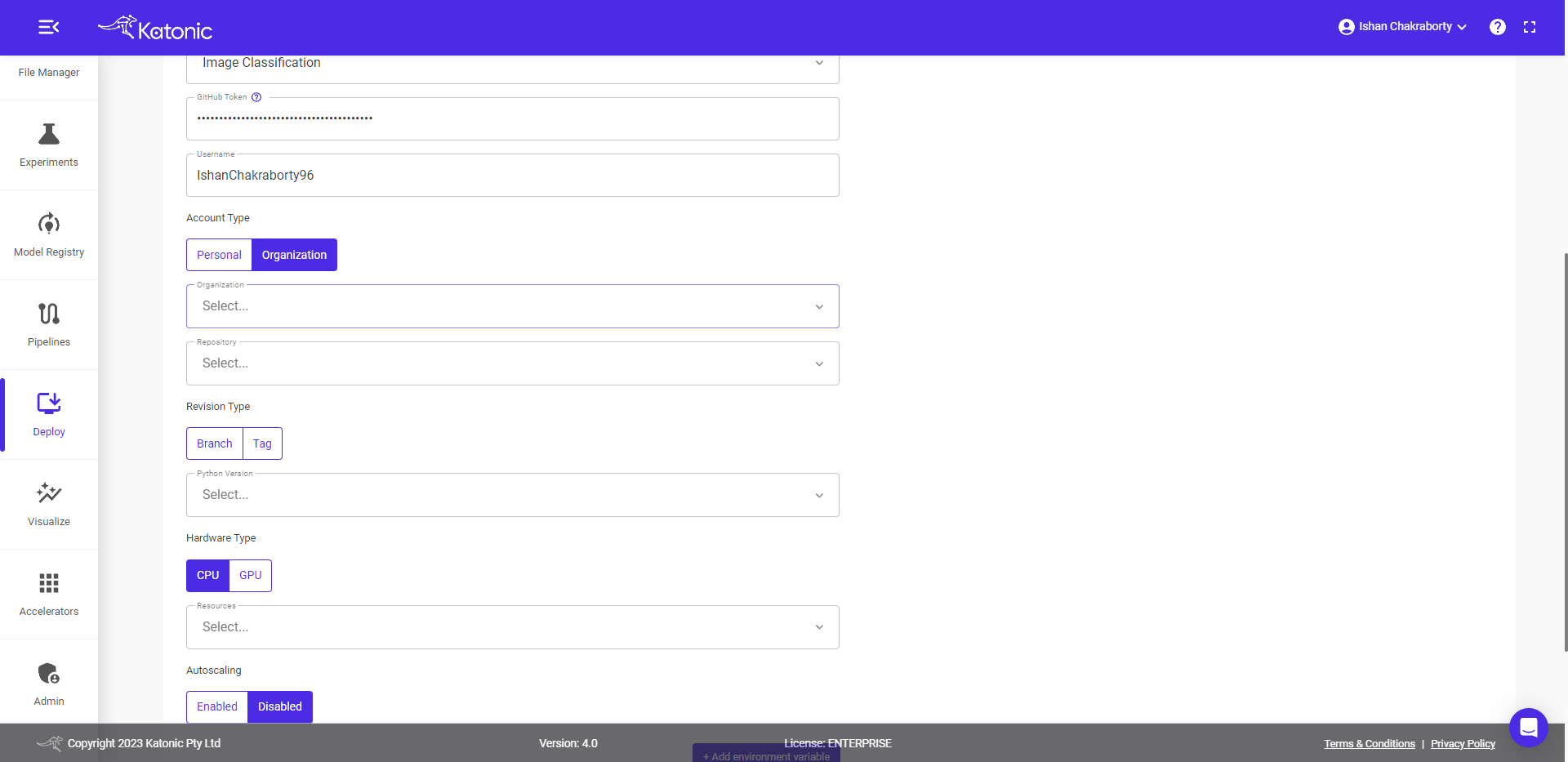

Select the Account type.

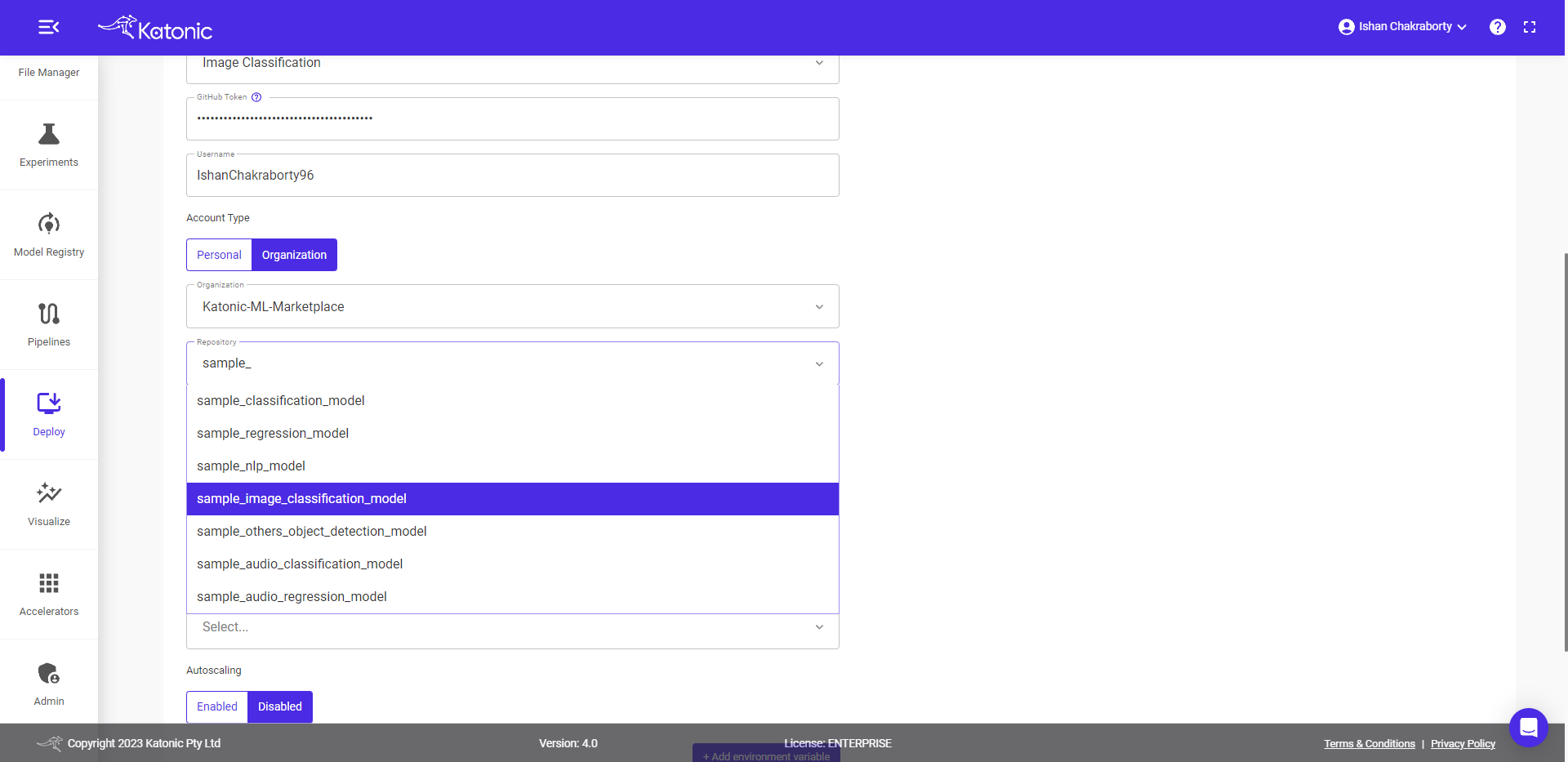

- Select Organization Name, if account type is

Organization.

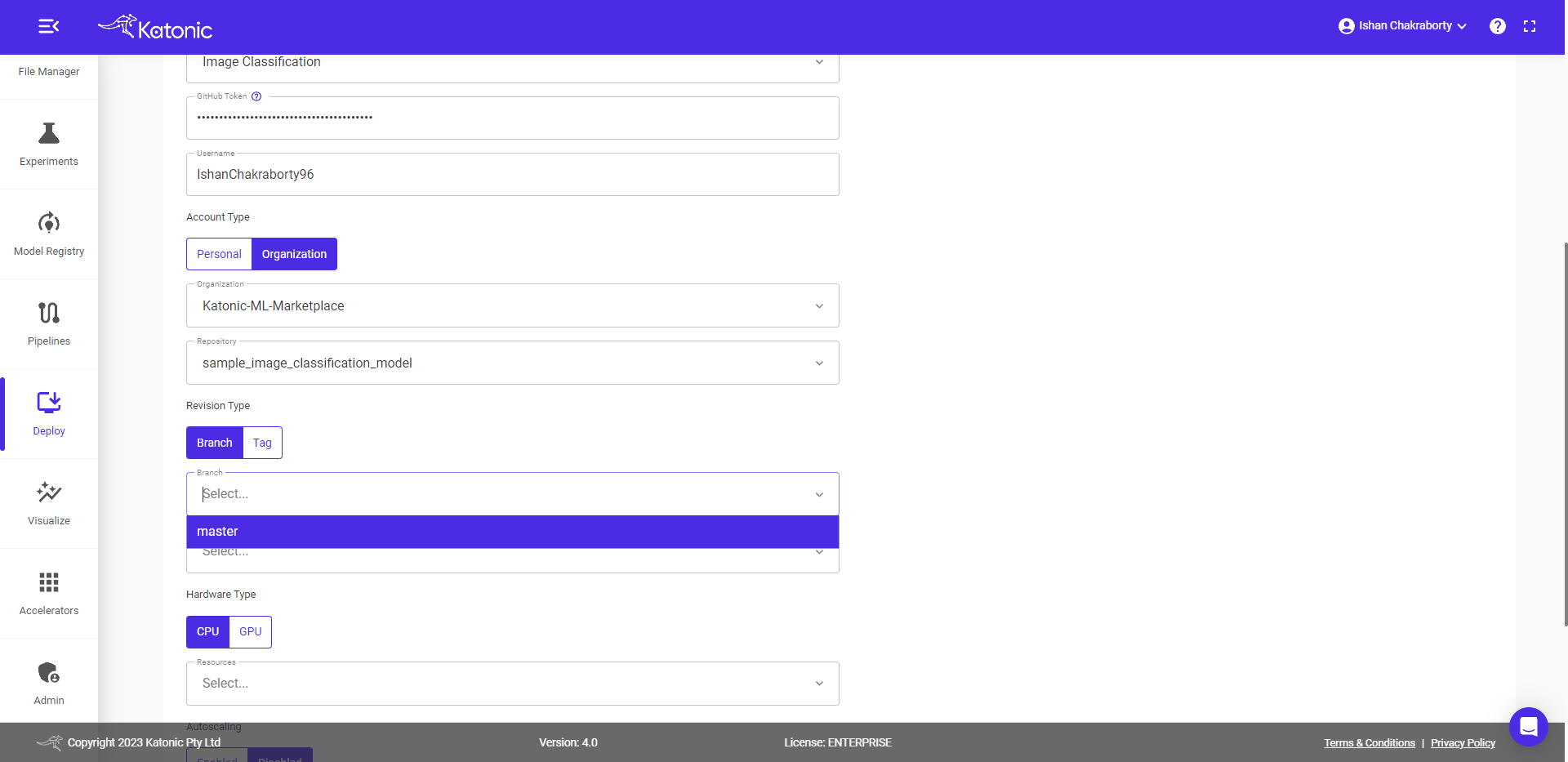

- Select the Repository.

- Select the Revision Type.

- Select the Branch Name, if revision type is

Branch.

Note: your GitHub repository must contain requirements.txt, schema.py and launch.py files whose templates are discussed above.

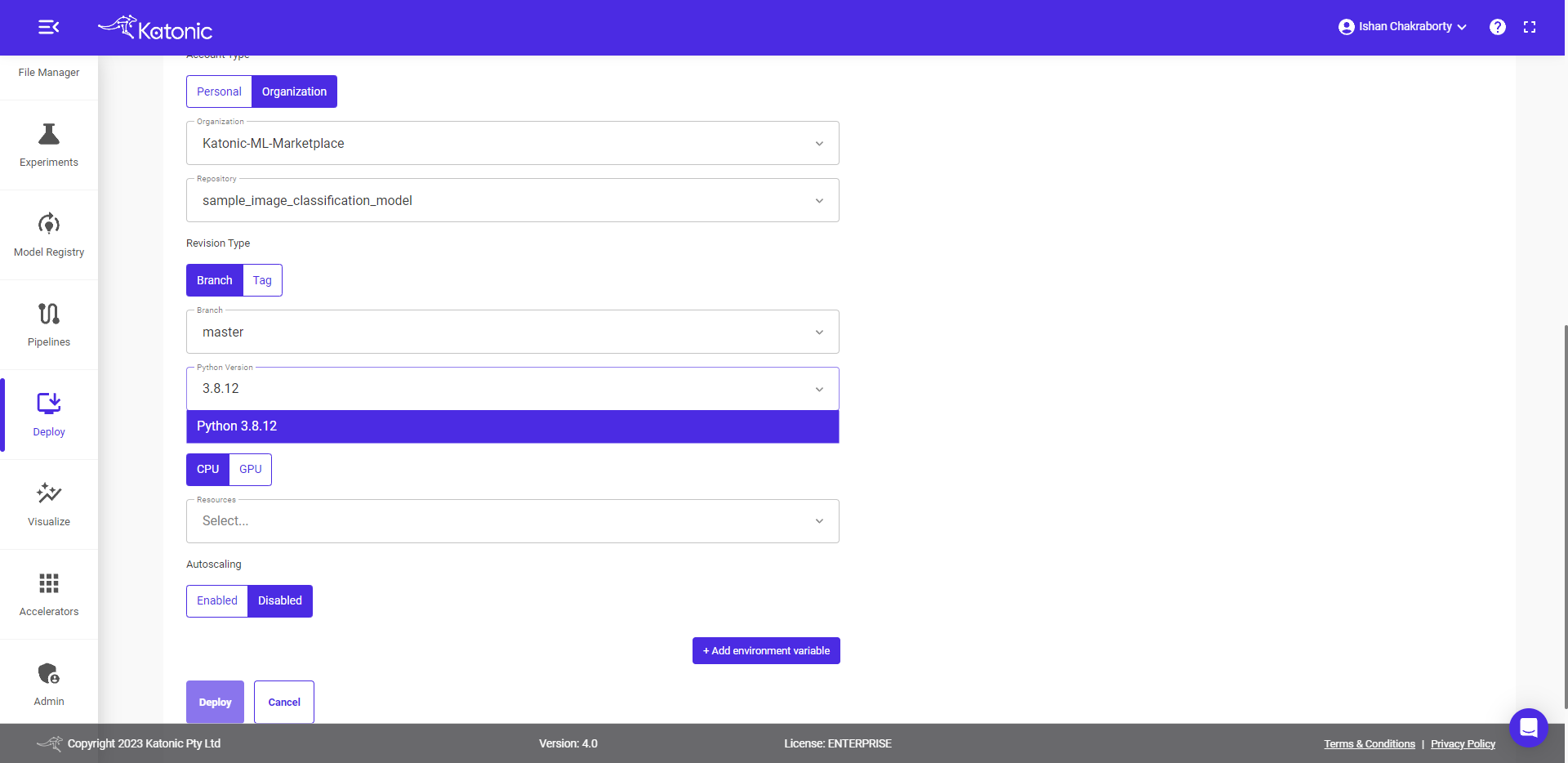

- Select Python version.

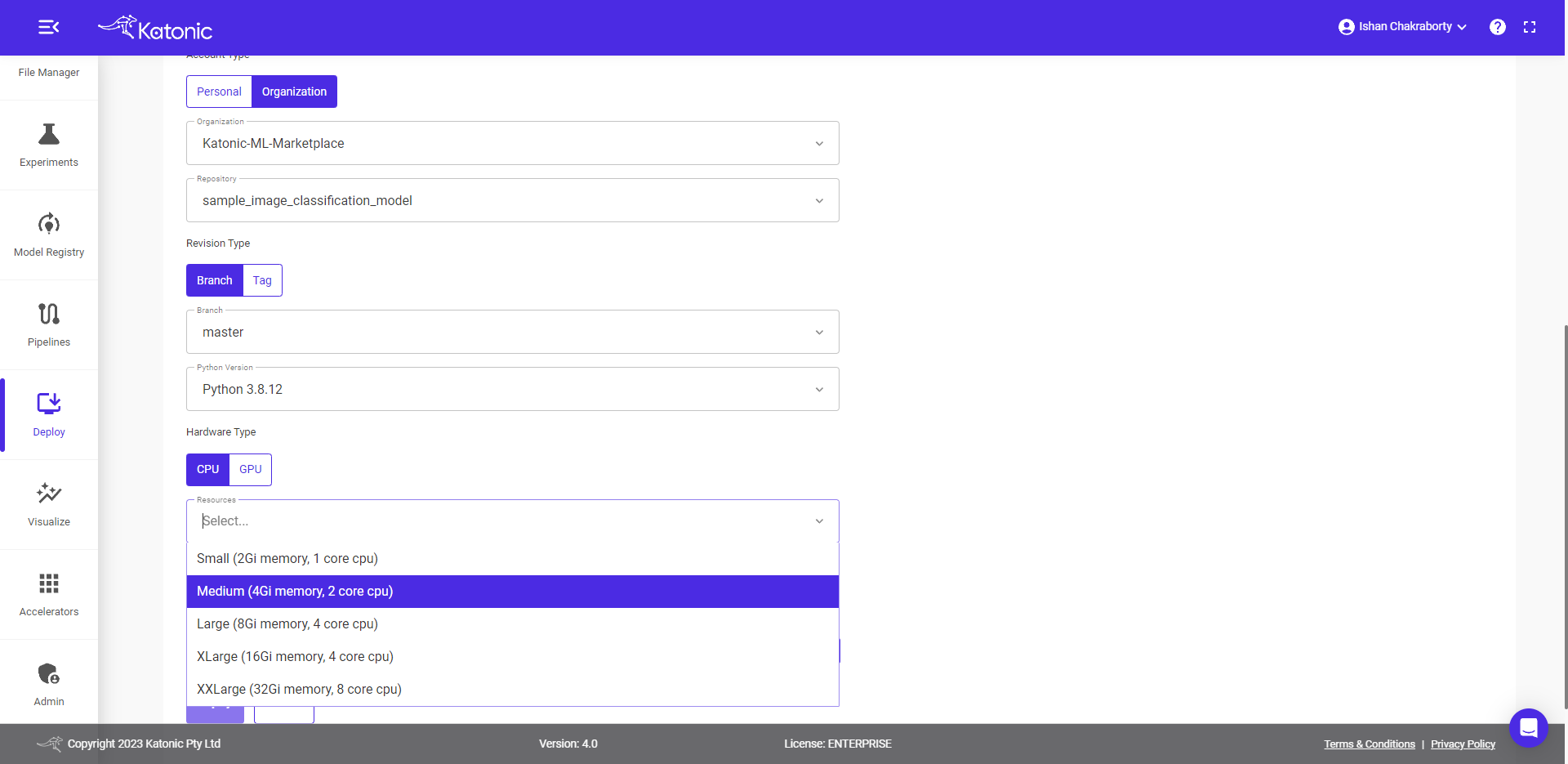

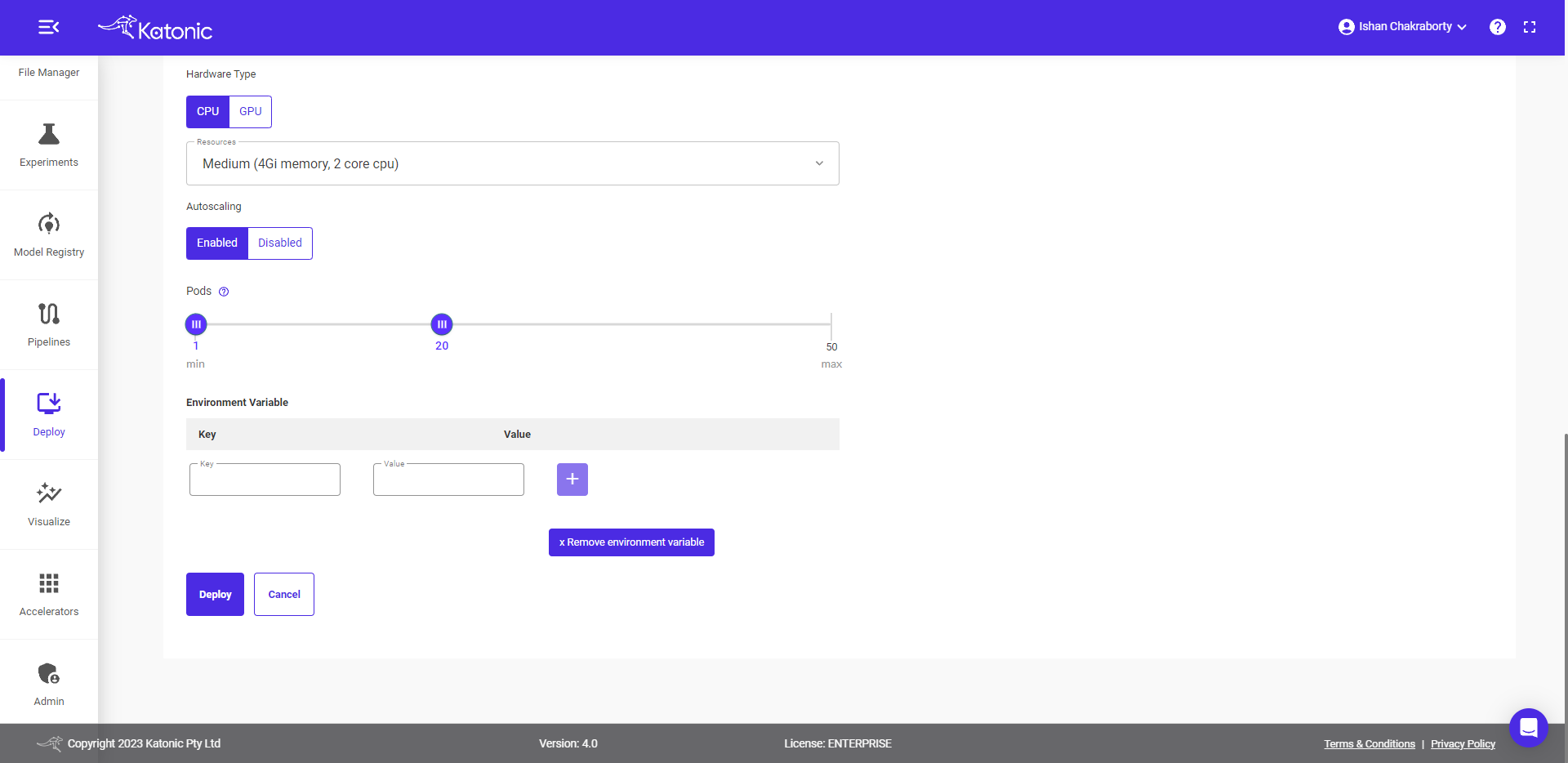

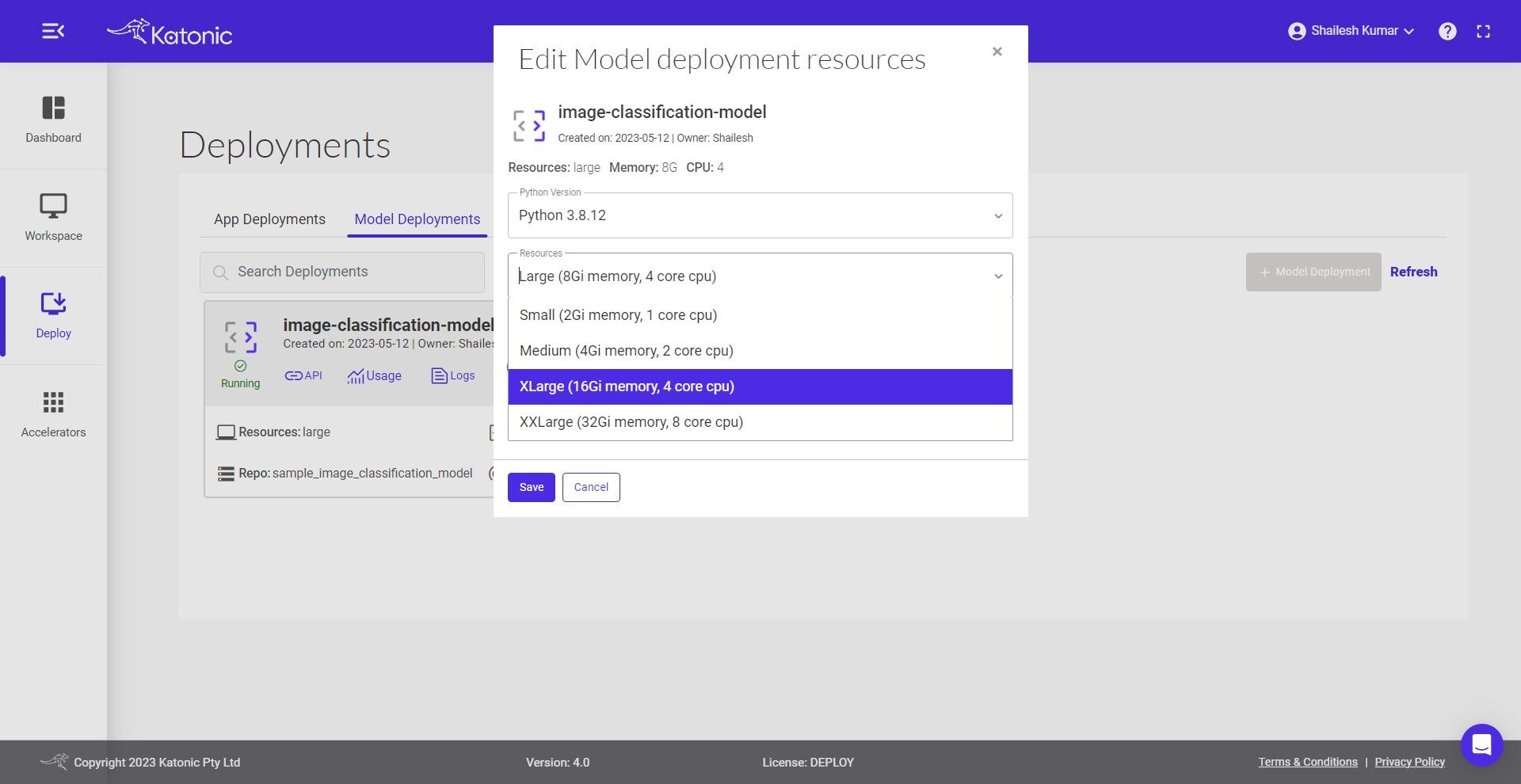

- Select Resources.

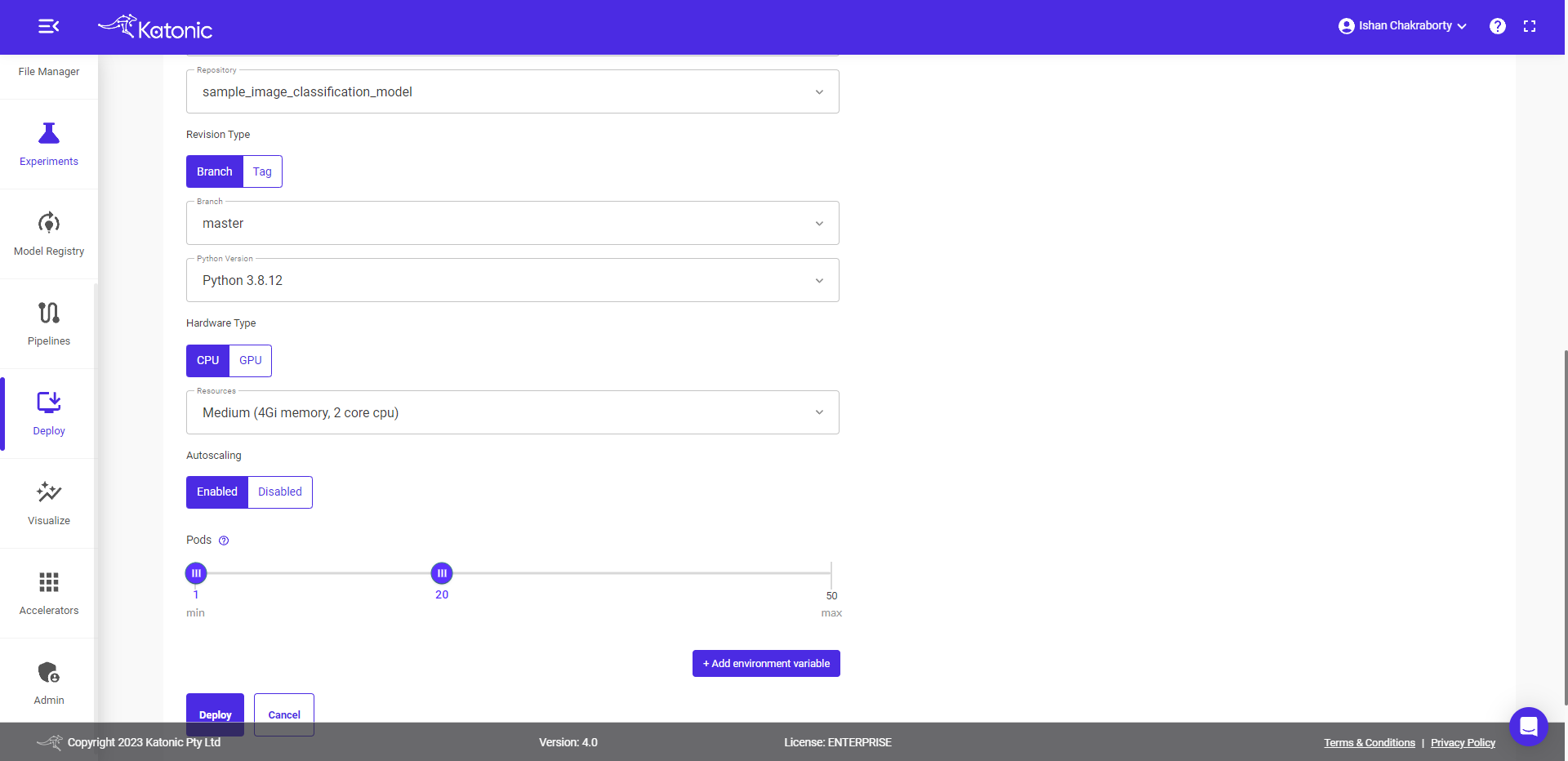

- Enable or Disable Autoscaling.

- Select Pods range, if the user enable Autoscaling.

- Click on Deploy.

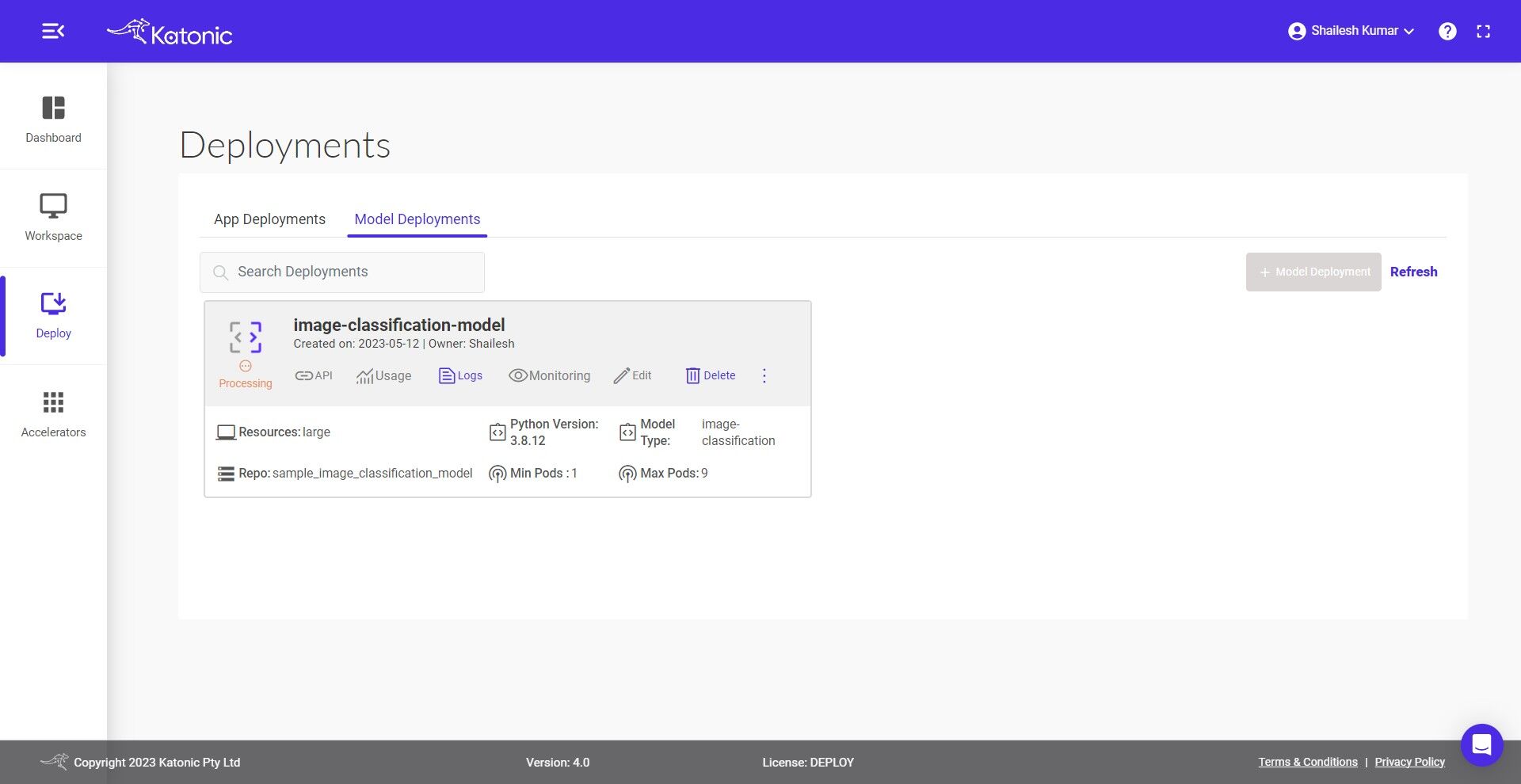

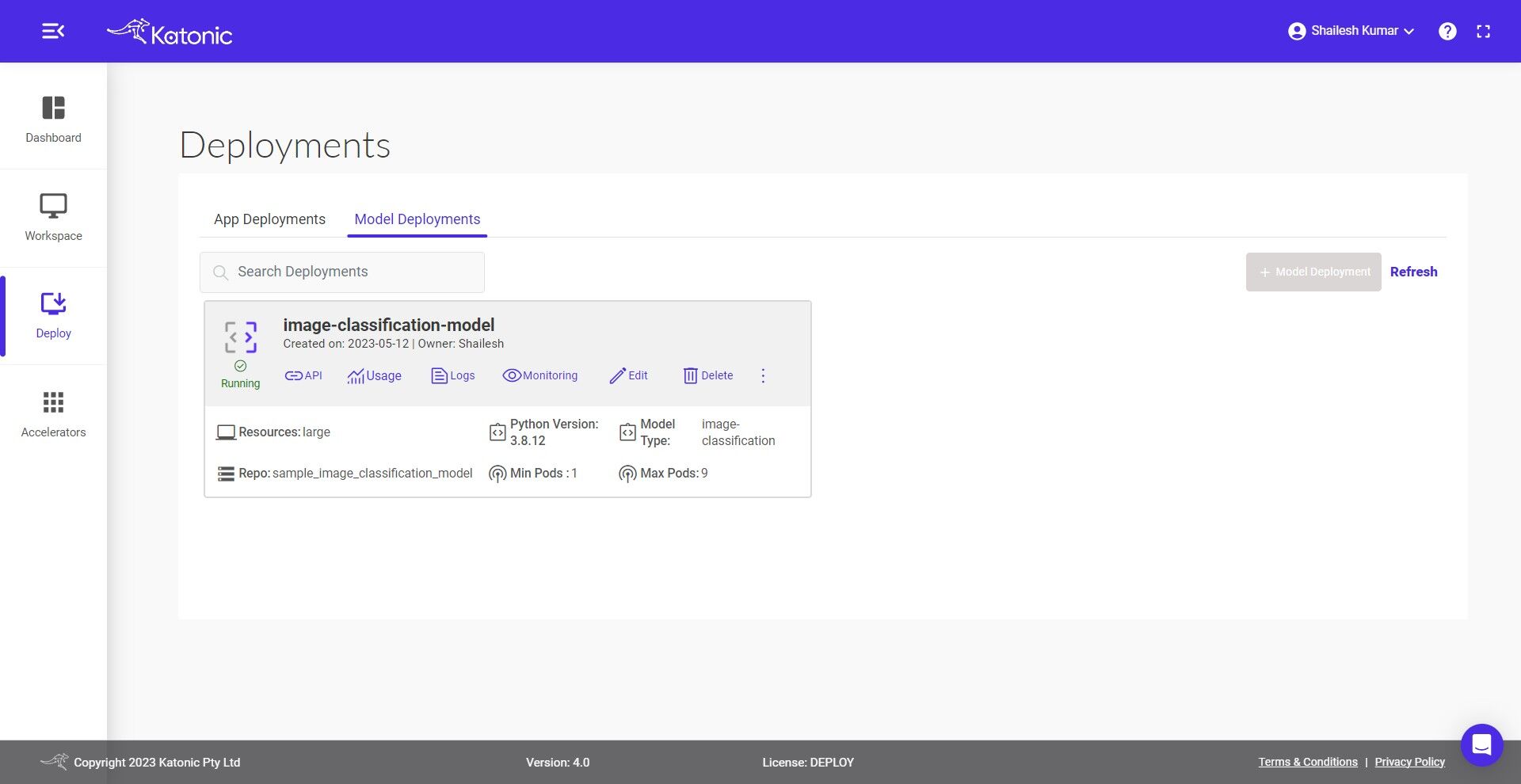

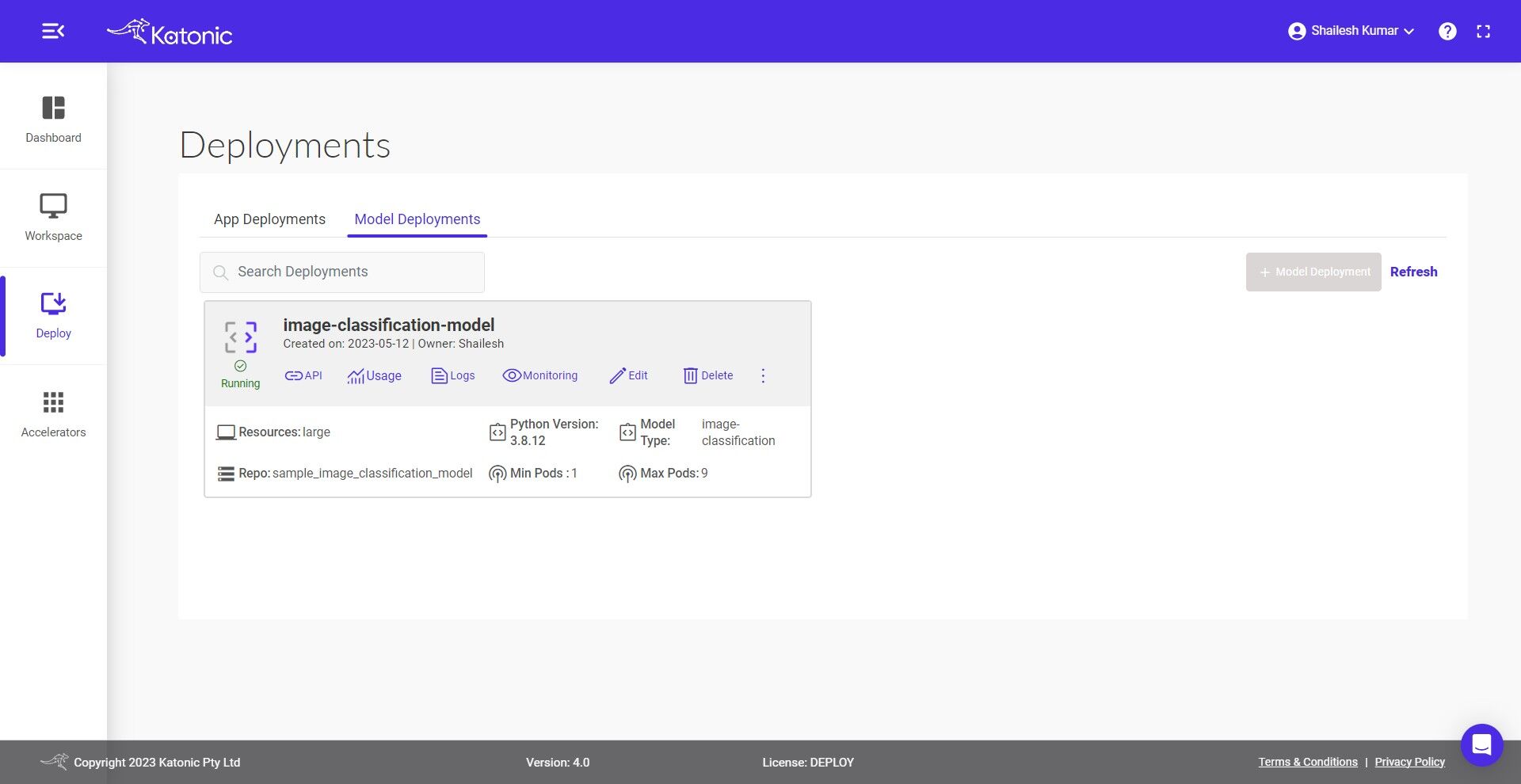

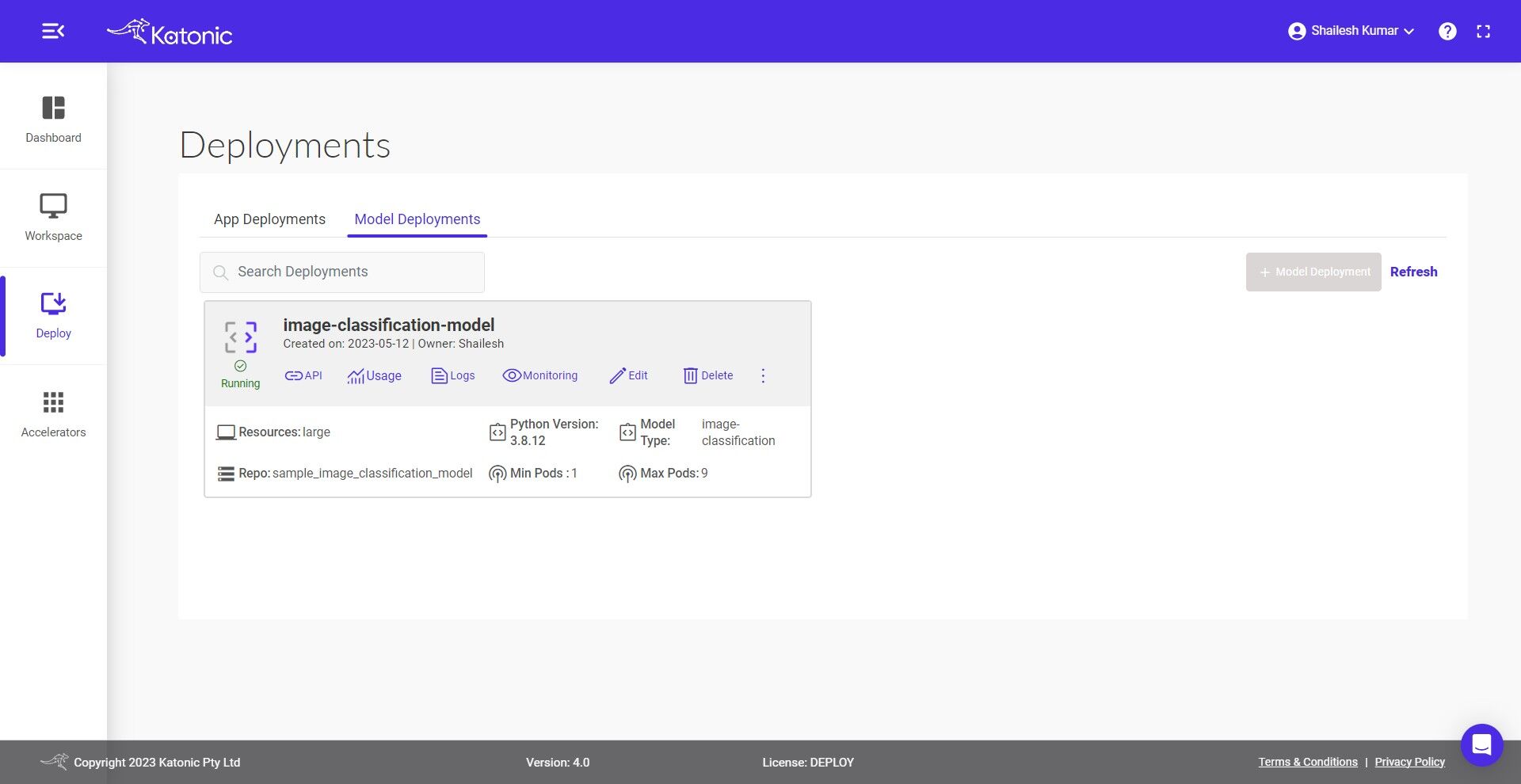

- Once your Custom Model API is created you will be able to view it in the Deploy section where it will be in "Processing" state in the beginning. Click on Refresh to update the status.

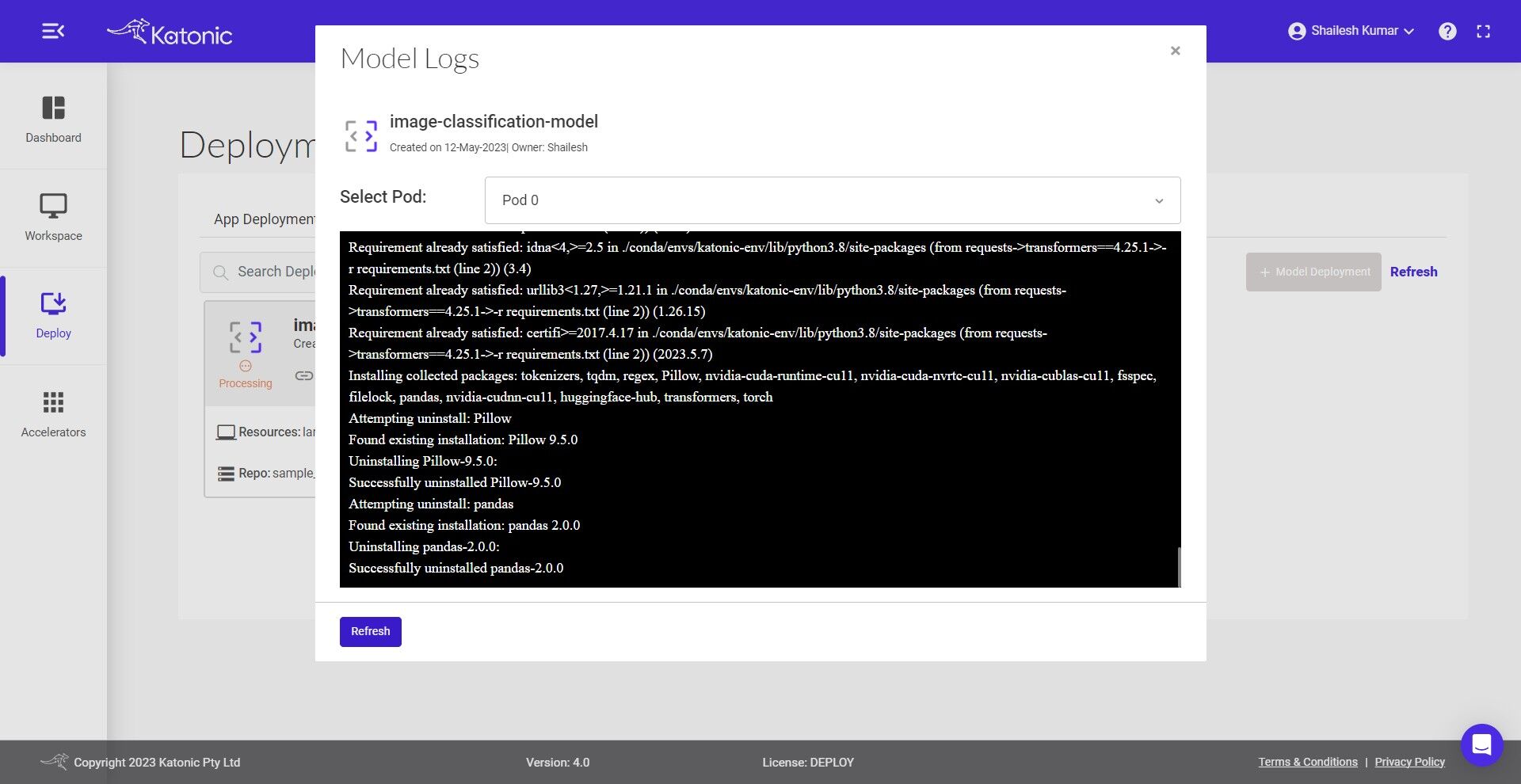

- You can also check out the logs to see the progress of the current deployment using Logs option.

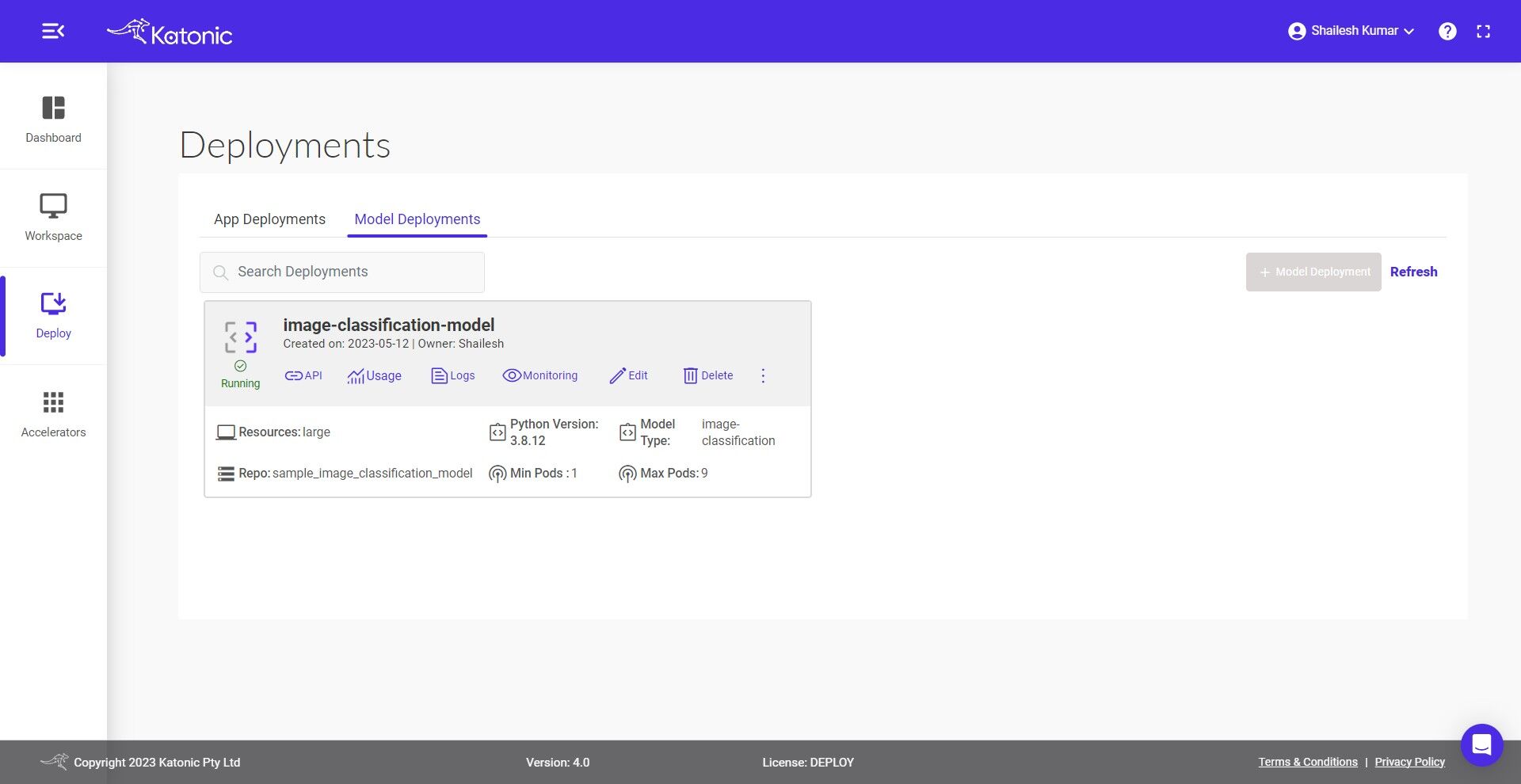

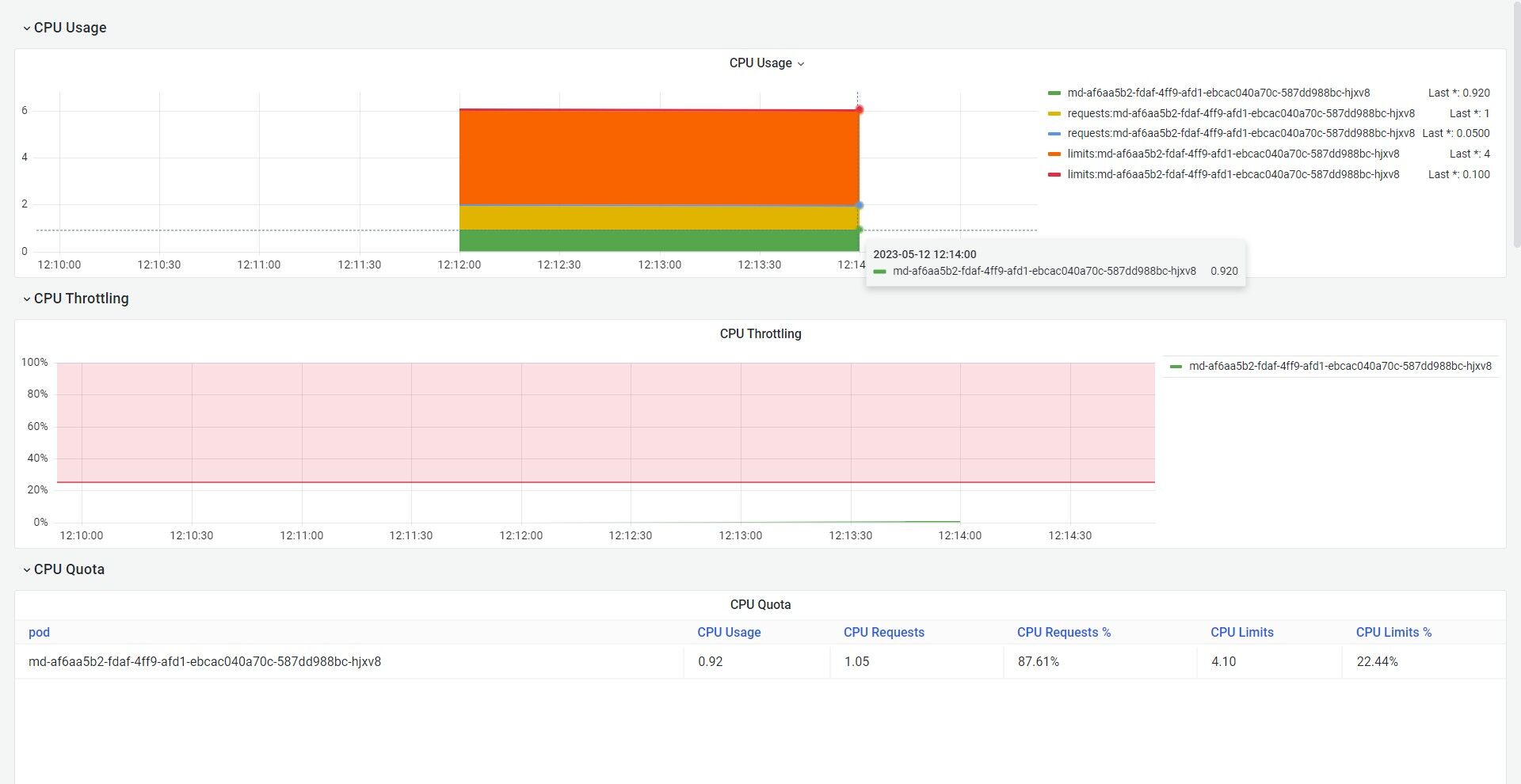

- Once your Model API is in the Running state you can check consumption of the hardware resources from Usage option.

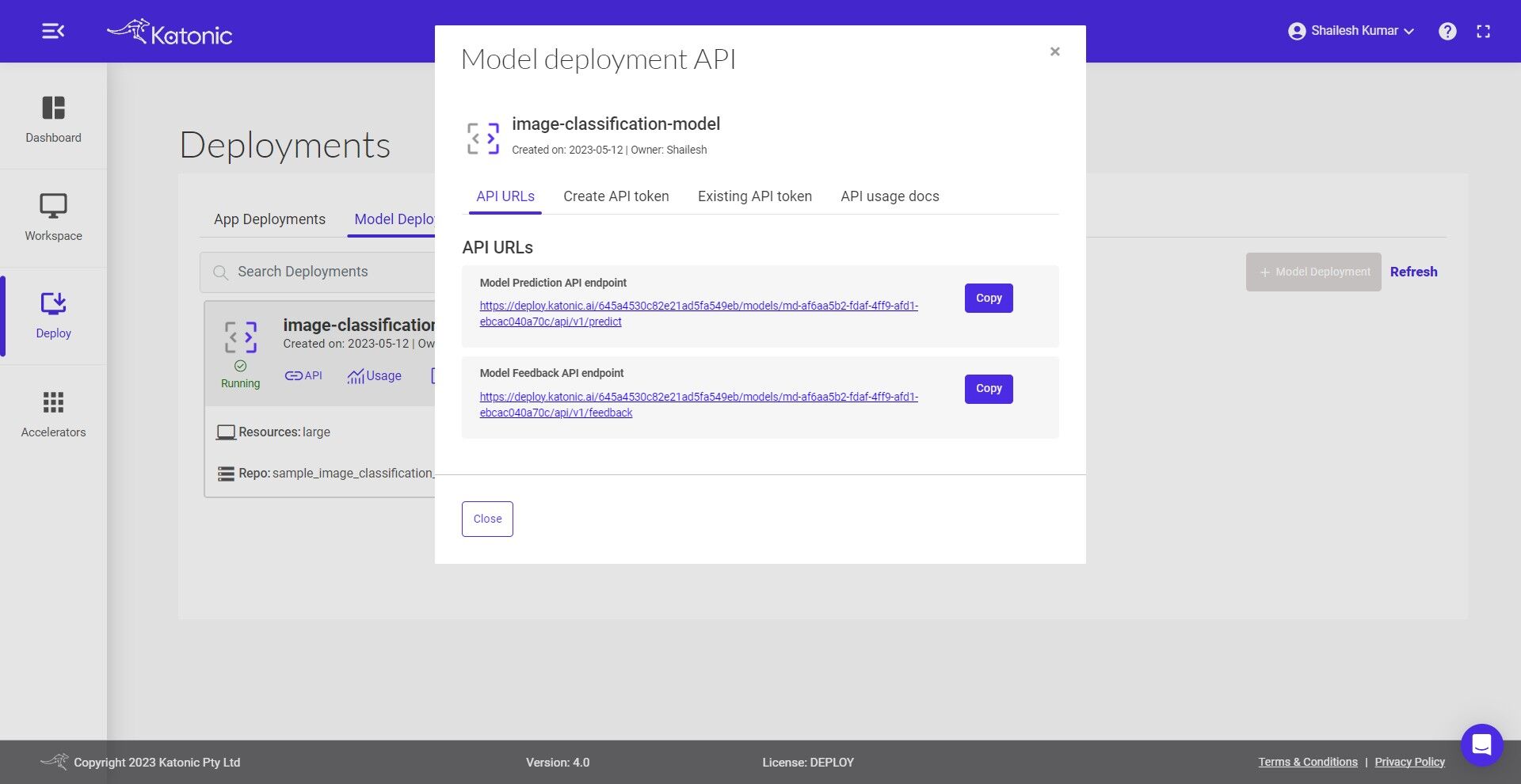

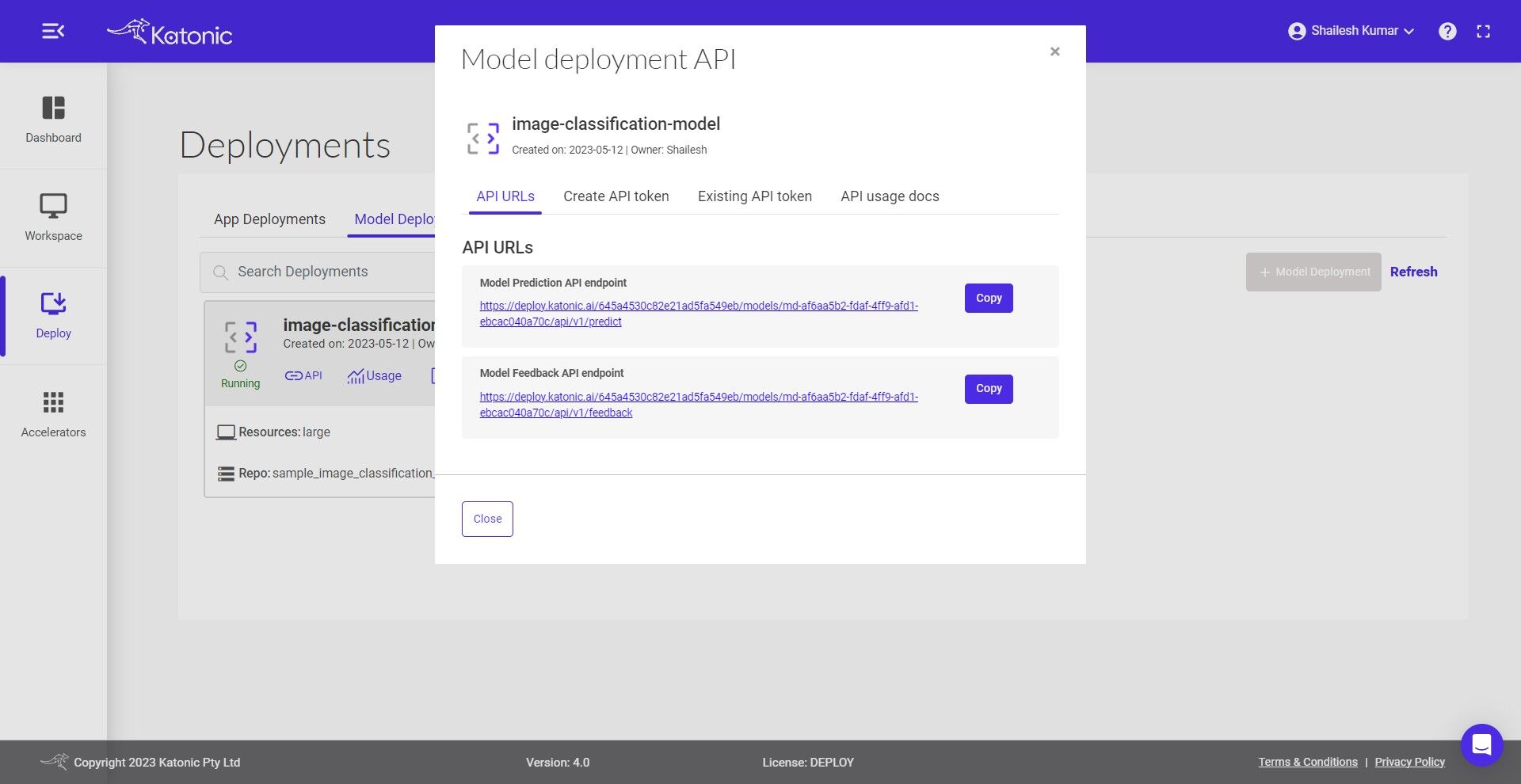

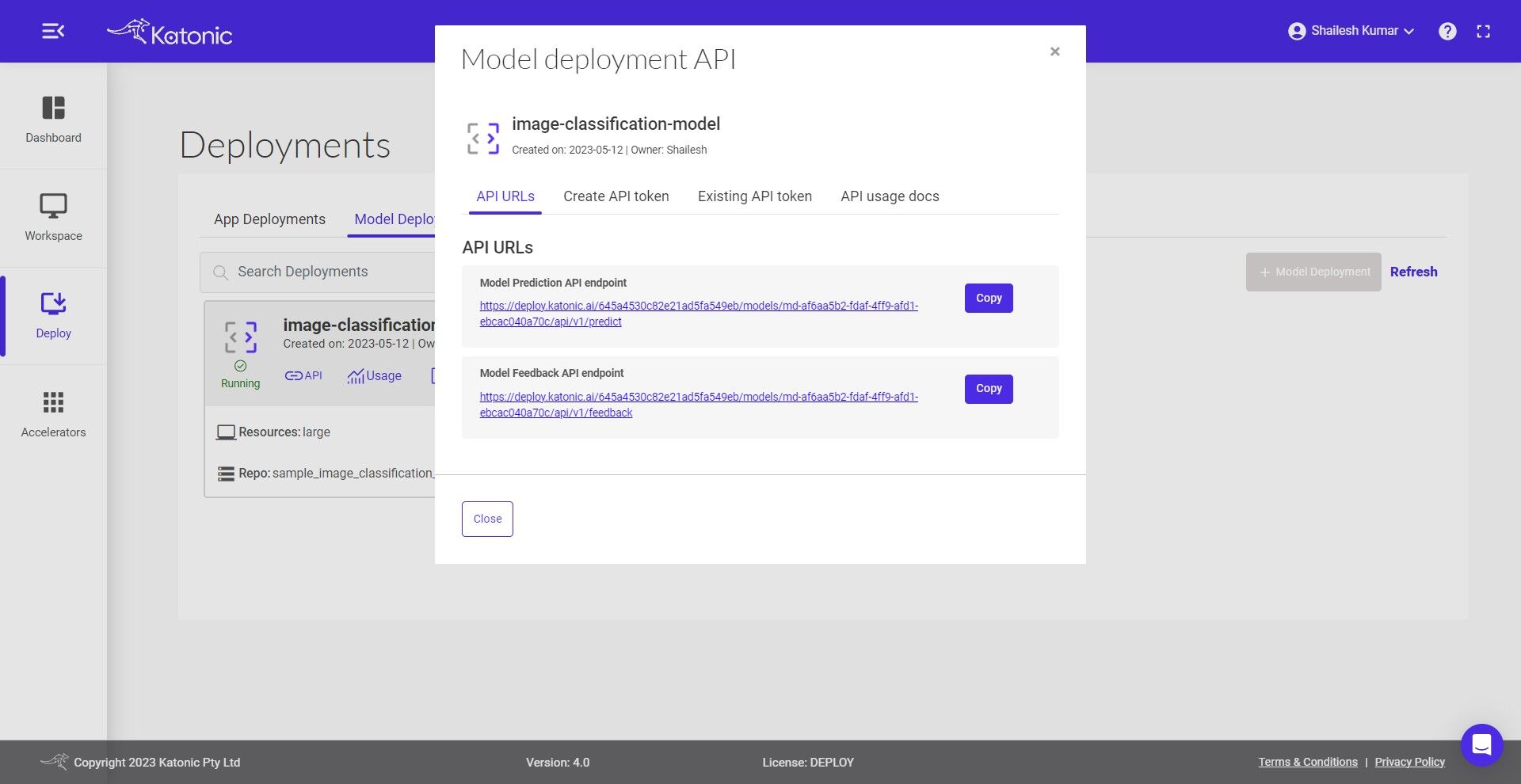

- You can access the API endpoints by clicking on API.

- There are two APIs under API URLs:

- Model Prediction API endpoint: This API is for generating the prediction from the deployed model Here is the code snippet to use the predict API:

MODEL_API_ENDPOINT = "Prediction API URL"

SECURE_TOKEN = "Token"

data = {"data": "Define the value format as per the schema file"}

result = requests.post(f"{MODEL_API_ENDPOINT}", json=data, verify=False, headers = {"Authorization": SECURE_TOKEN})

print(result.text)

- Model Feedback API endpoint: This API is for monitoring the model performance once you have the true labels available for the data. Here is the code snippet to use the feedback API. The predicted labels can be saved at the destination sources and once the true labels are available those can be passed to the feedback url to monitor the model continuously.

MODEL_FEEDBACK_ENDPOINT = "Feedback API URL"

SECURE_TOKEN = "Token"

true = "Pass the list of true labels"

pred = "Pass the list of predicted labels"

data = {"true_label": true, "predicted_label": pred}

result = requests.post(f"{MODEL_API_ENDPOINT}", json=data, verify=False, headers = {"Authorization": SECURE_TOKEN})

print(result.text)

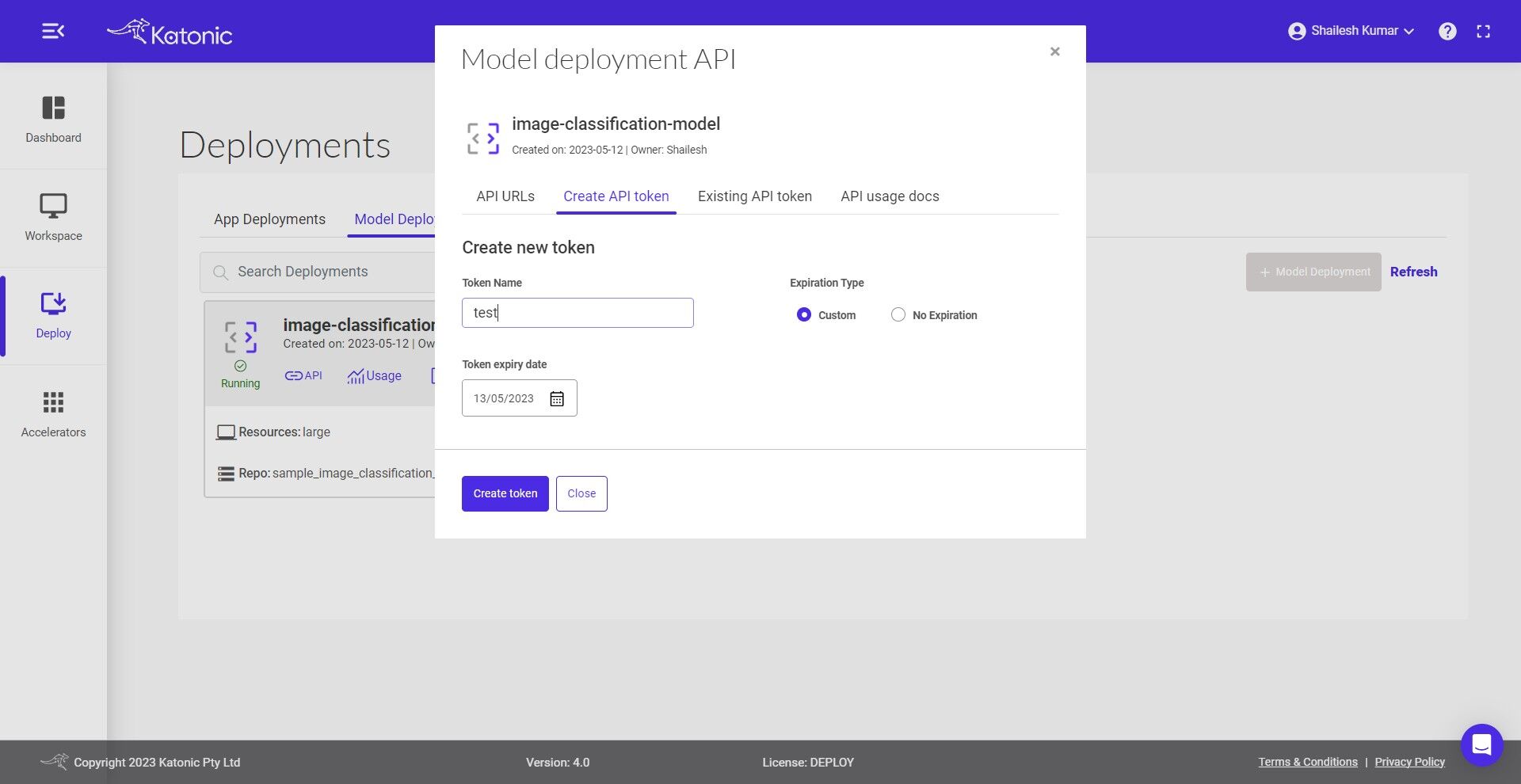

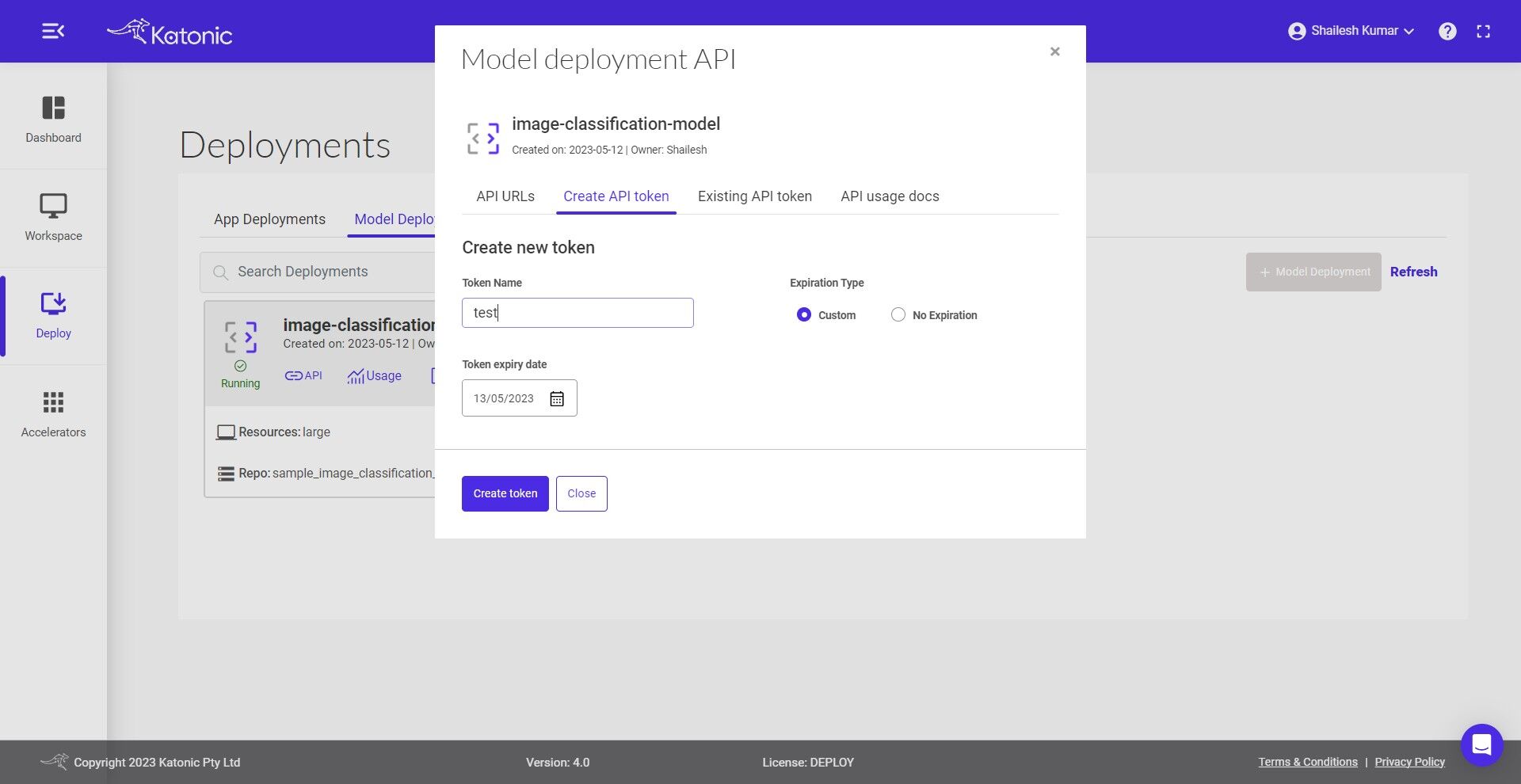

- Click on the Create API token to generate a new token in order to access the API

- Give a name to the token.

Select the Expiration Time

Set the Token Expiry Date

Click on Create Token and generate your API Token from the pop-up dialog box.

Note: A maximum of 10 tokens can be generated for a model.Copy the API Token that was created. As it is only available once, be sure to save it.

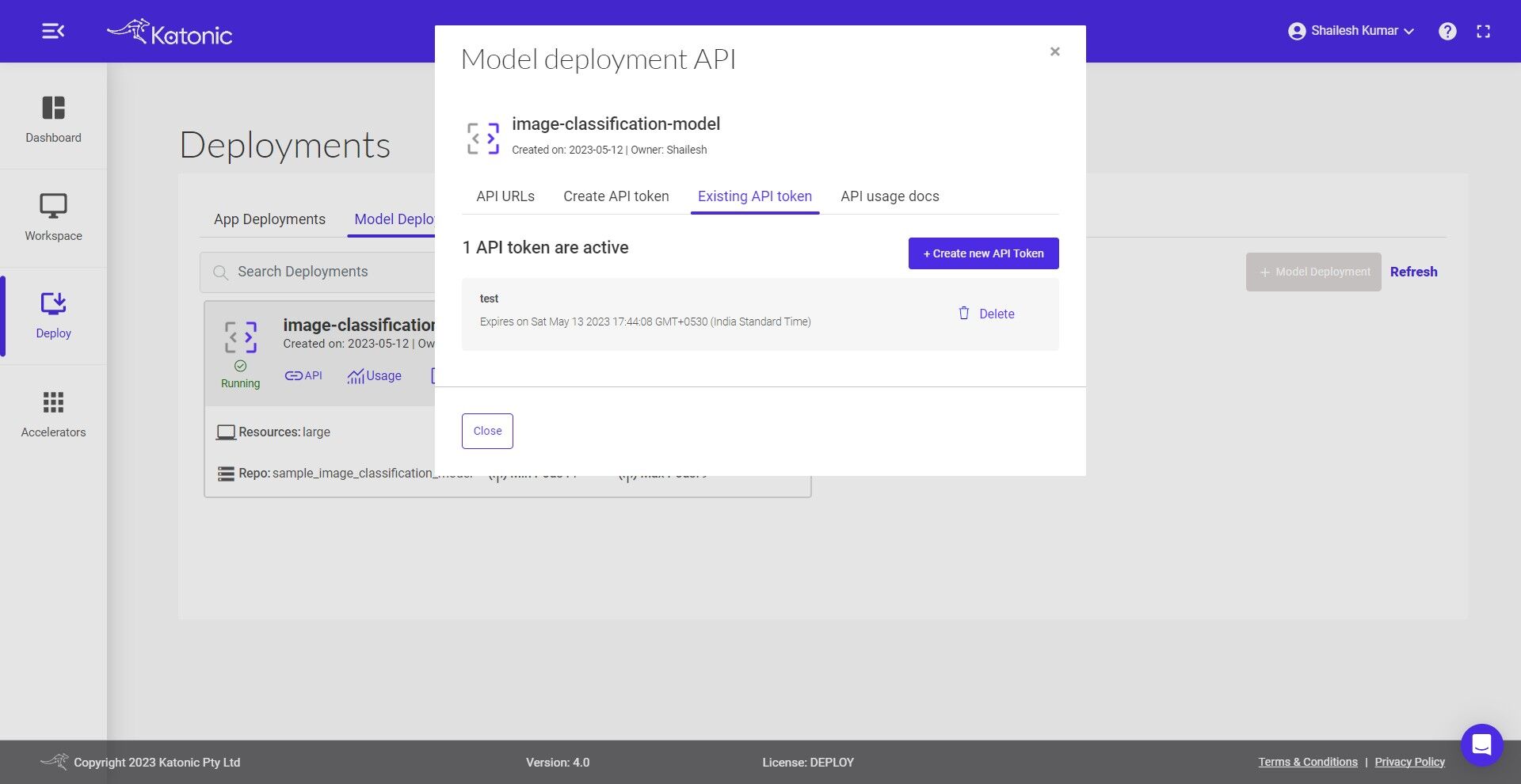

- Under the Existing API token section you can manage the generated token and can delete the no longer needed tokens.

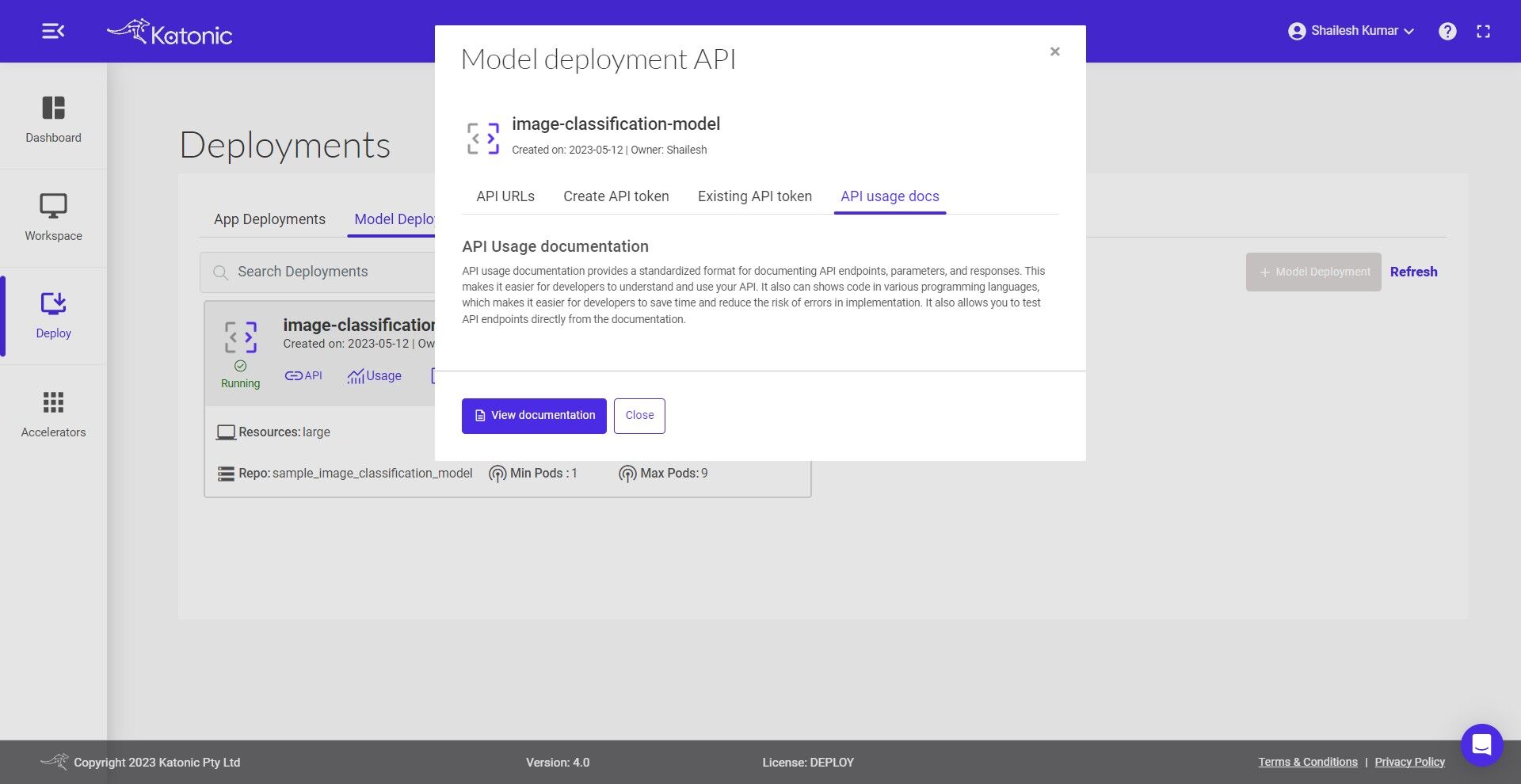

- API usage docs briefs you on how to use the APIs and even gives the flexibility to conduct API testing.

- To know more about the usage of generated API you can follow the below steps

- This is a guide on how to use the endpoint API. Here you can test the API with different inputs to check the working model.

- In order to test API you first need to Authorize yourself by adding the token as shown below. Click on Authorize and close the pop-up.

- Once it is authorise you can click on Predict_Endpoint bar and scroll down to Try it out.

- If you click on the Try it out button, the Request body panel will be available for editing. Put some input values for testing and the number of values/features in a record must be equal to the features you used while training the model.

- If you click on execute, you would be able to see the prediction results at the end. If there are any errors you can go back to the model card and check the error logs for further investigation.

- You can also modify the resources,version minimum and maximum pods of your deployed model by clicking the Edit option and saving the updated configuration.

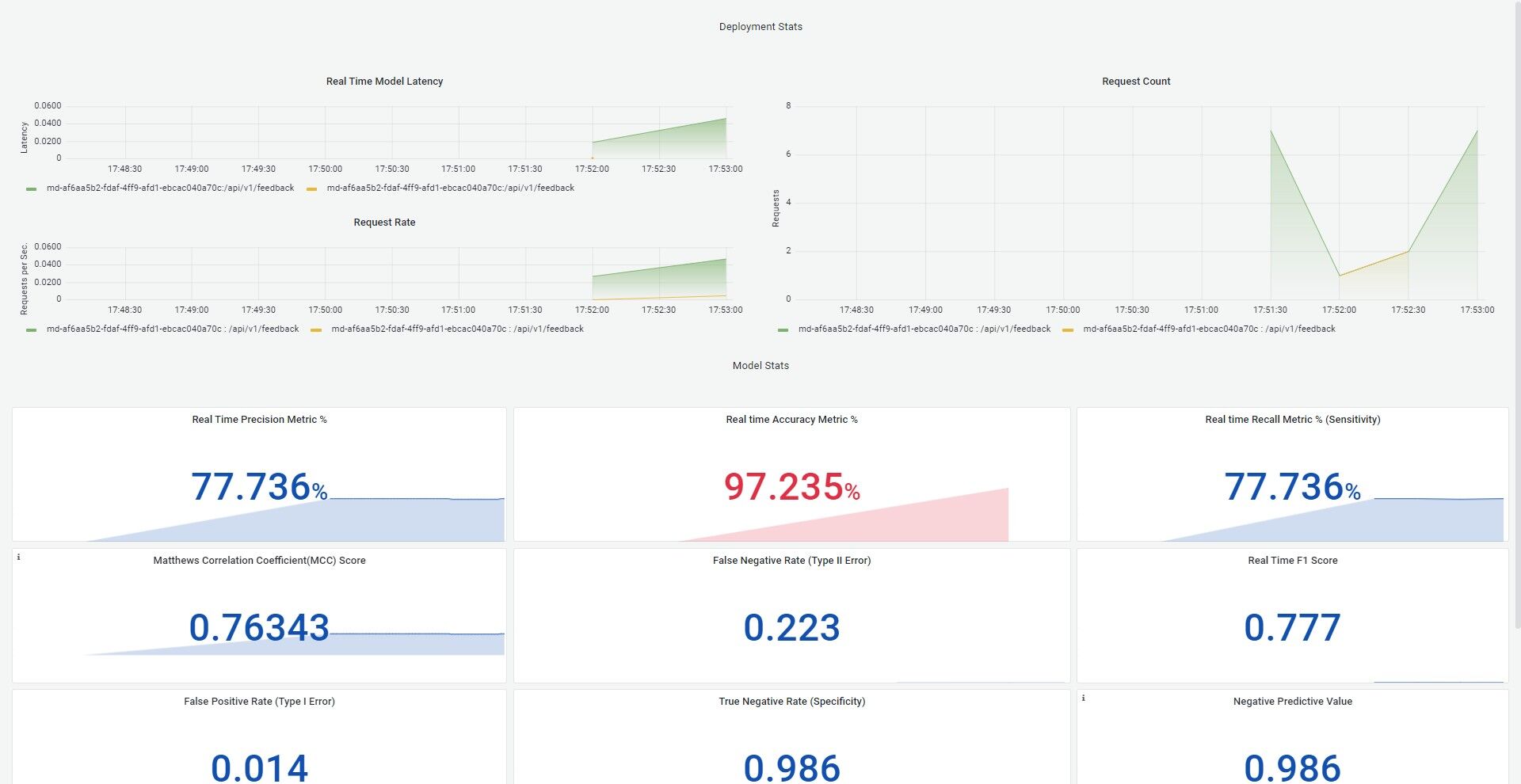

- Click on Monitoring, and a dashboard would open up in a new tab. This will help to monitor the effectiveness and efficiency of your deployed model. Refer the Model Monitoring section in the Documentation to know more about the metrics that are been monitored.

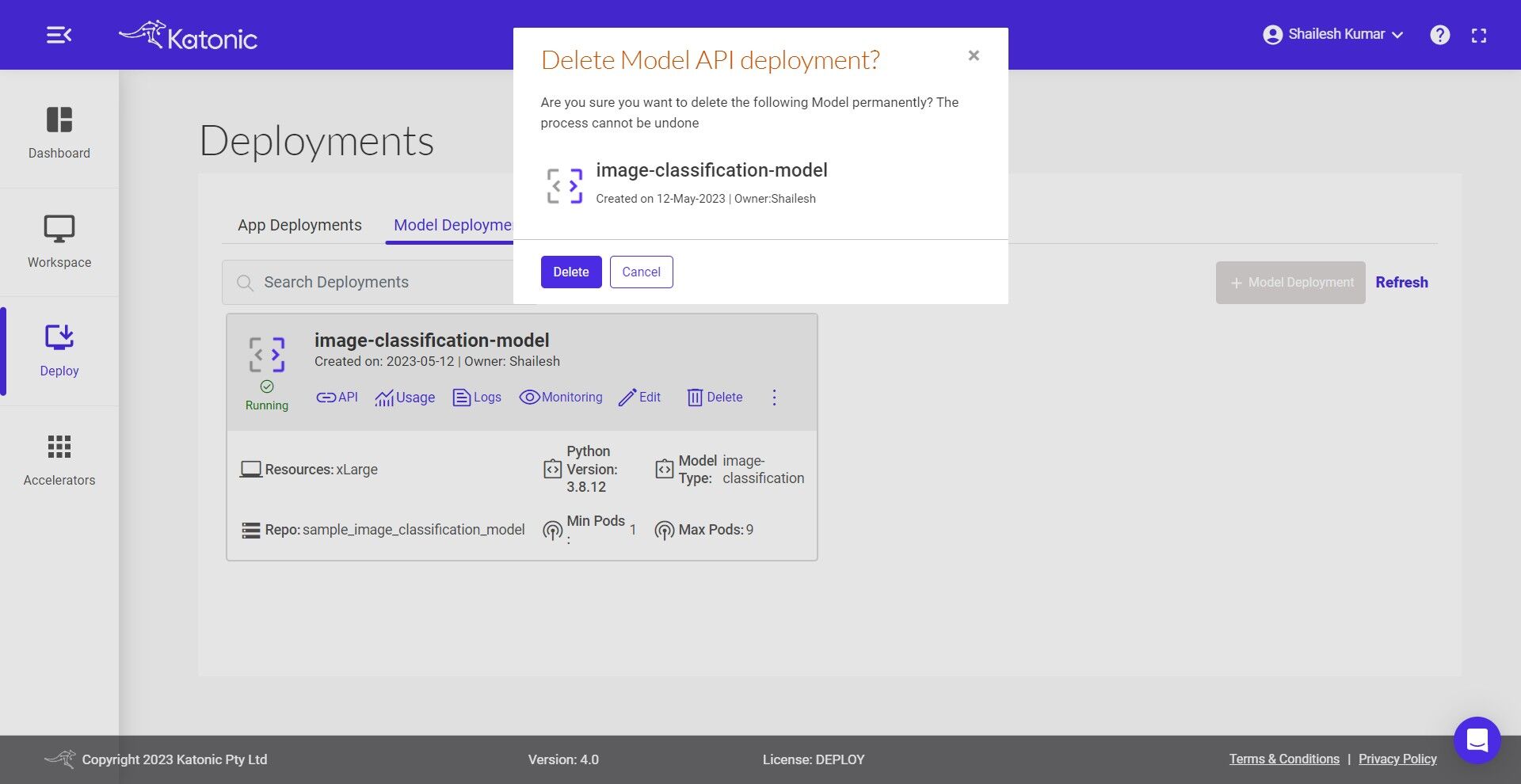

- To delete the unused models use the Delete button.