Notebook Conversion to Pipeline

Before deploying a model to production, we will convert the notebook into a pipeline using Katonic's Pipeline Deployment Panel.

This pipeline automates every phase you have completed up to model training, and it can also be used to train the model itself.

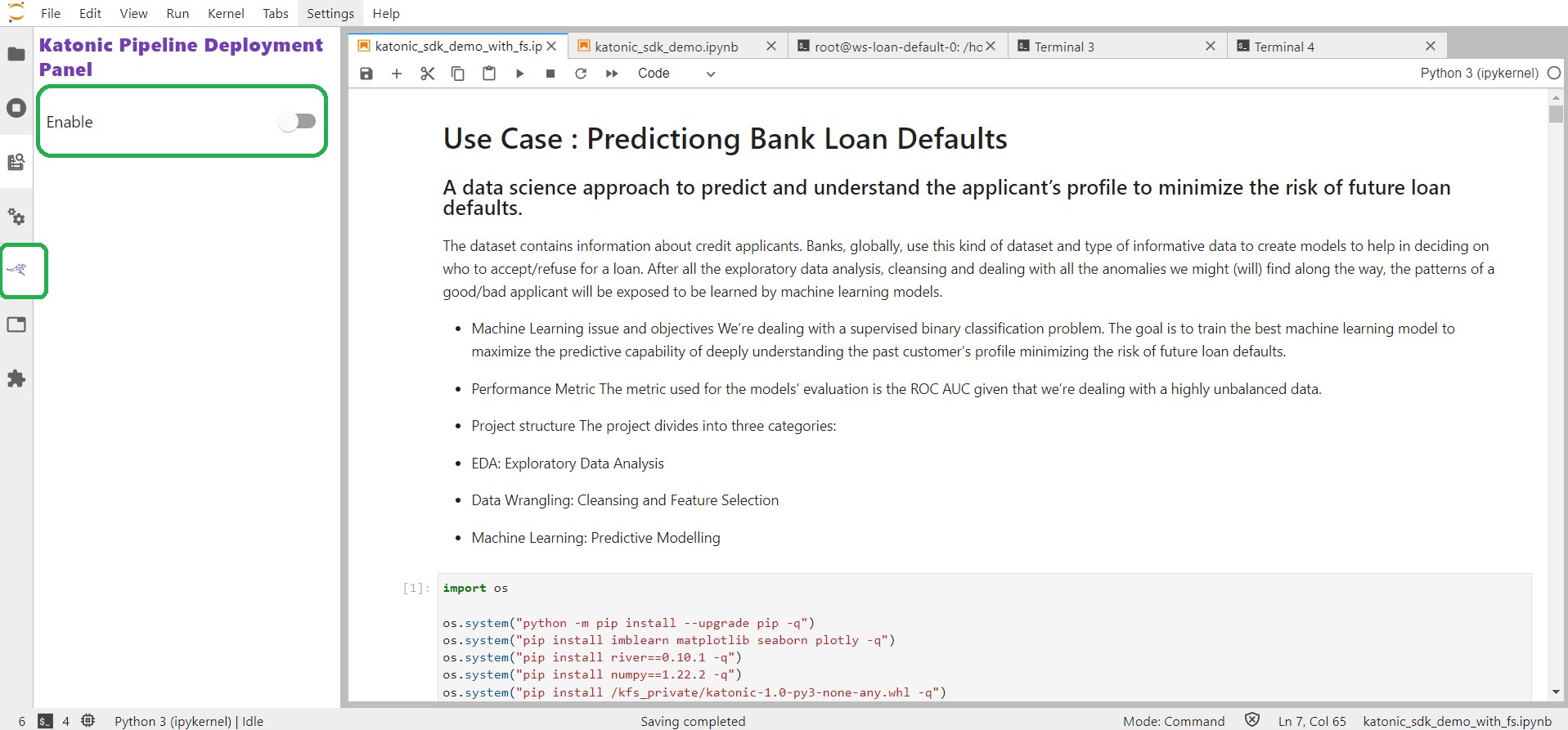

Open the notebook and enable the Katonic Pipeline Deployment Panel. The option is in the notebook's left sidebar.

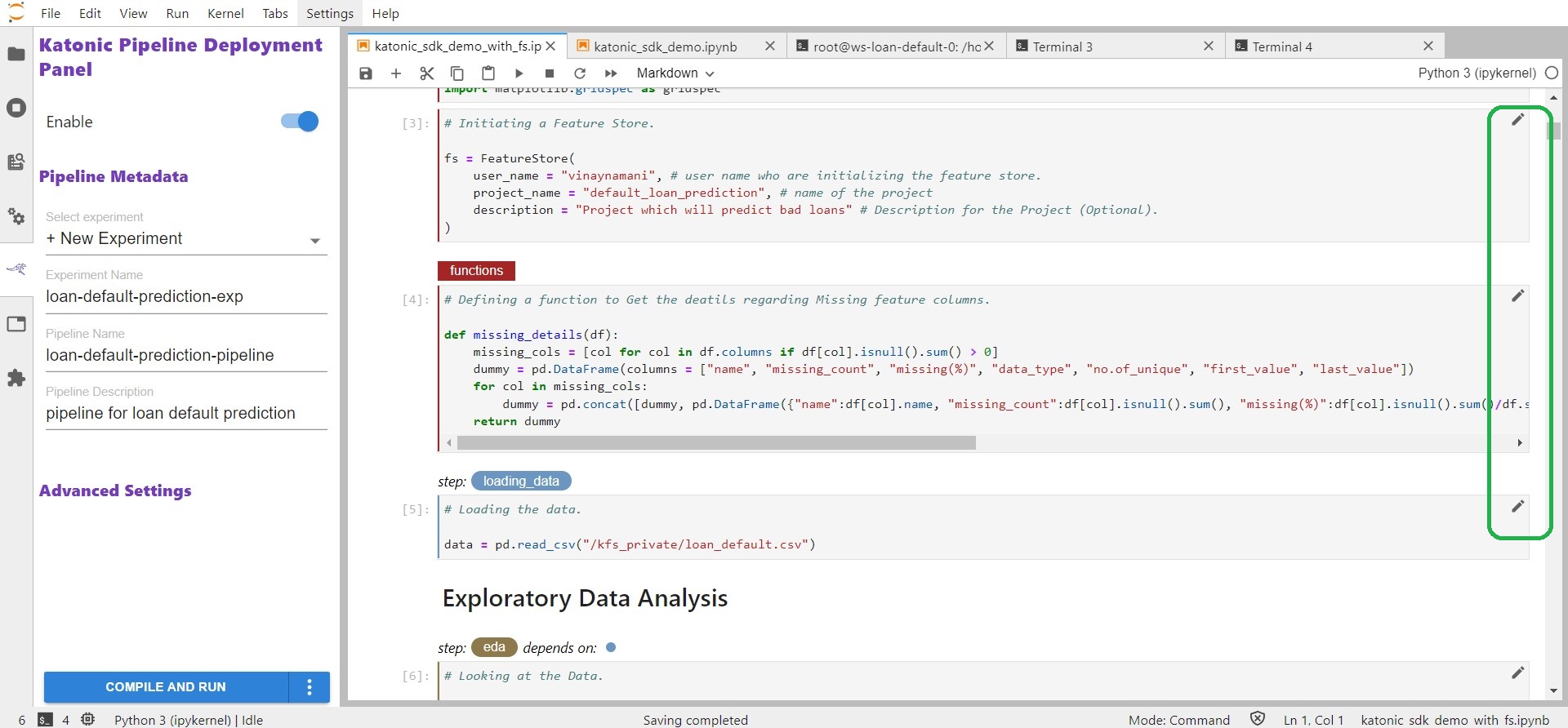

Once the Deployment Panel is enabled, every cell in the notebook shows an edit option (a pencil icon) that lets you tag the cell according to the role it plays in the pipeline. If a cell depends on another cell, you must also declare that dependency.
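Conceptually, declared dependencies turn the tagged steps into a directed acyclic graph, and any run order that respects every dependency is a topological order of that graph. The following is a minimal sketch using Python's standard `graphlib` module (Python 3.9+); the step names are hypothetical, and this illustrates the idea rather than how Katonic resolves dependencies internally.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline steps, each mapped to the set of steps it depends on.
dependencies = {
    "load_data": set(),
    "preprocess": {"load_data"},
    "train_model": {"preprocess"},
    "evaluate": {"preprocess", "train_model"},
}


def execution_order(deps):
    """Return the steps in an order that respects every declared dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)  # ['load_data', 'preprocess', 'train_model', 'evaluate']

# A circular dependency has no valid order, so it is rejected with CycleError.
try:
    execution_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("circular dependency detected")
```

A missing dependency declaration would let a step run before the data it needs exists, which is why each dependent cell has to name its predecessors.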

Available cell types:

| Cell type | Cell should contain |
|---|---|
| Imports | Blocks of code that import other modules your machine learning pipeline requires and that may be needed by more than one step. |
| Functions | Functions used later in your machine learning pipeline; global variable definitions (other than pipeline parameters); and code that initializes lists, dictionaries, objects, and other values used throughout your pipeline. |
| Pipeline Parameters | Definitions of global variables used to parameterize your machine learning workflow. These are often training hyperparameters. |
| Pipeline Metrics | Lines of code that log or print values used to measure the success of your model. |
| Pipeline Step | Code that implements the core logic of a discrete step in your workflow. |
| Skip Cell | Any code that should be excluded from the pipeline. |
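The sketch below lays out a small training notebook in Python, with the cell type each cell would be tagged as written in a comment. It is illustrative only: the step names `load_data` and `train_model`, the parameter values, and the toy gradient-descent model are assumptions, and it does not show how the panel actually records tags or dependencies.

```python
# --- Cell tagged "Imports" ---
import random
import statistics

# --- Cell tagged "Pipeline Parameters" (hyperparameters) ---
LEARNING_RATE = 0.05
EPOCHS = 500
SEED = 42


# --- Cell tagged "Functions" ---
def predict(w, b, x):
    """Linear model y = w * x + b."""
    return w * x + b


def mse(w, b, xs, ys):
    """Mean squared error of the model over the dataset."""
    return statistics.fmean((predict(w, b, x) - y) ** 2 for x, y in zip(xs, ys))


# --- Cell tagged "Pipeline Step" (hypothetical step "load_data") ---
random.seed(SEED)
xs = [i / 10 for i in range(50)]
ys = [2.0 * x + 1.0 + random.gauss(0, 0.1) for x in xs]  # noisy y = 2x + 1

# --- Cell tagged "Pipeline Step" (hypothetical "train_model", depends on "load_data") ---
w, b = 0.0, 0.0
n = len(xs)
for _ in range(EPOCHS):
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (predict(w, b, x) - y) for x, y in zip(xs, ys)) / n
    w -= LEARNING_RATE * grad_w
    b -= LEARNING_RATE * grad_b

# --- Cell tagged "Pipeline Metrics" ---
final_mse = mse(w, b, xs, ys)
print(f"w={w:.3f} b={b:.3f} mse={final_mse:.4f}")

# --- Cell tagged "Skip Cell" ---
# Exploratory check: runs in the notebook but is excluded from the pipeline.
print("first samples:", list(zip(xs, ys))[:3])
```

Keeping the hyperparameters in a single Pipeline Parameters cell separates values you may want to change between runs from the step logic that uses them.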

Once every cell is tagged and its dependencies are defined, you can compile and run the pipeline.

To run the pipeline, you first need to define its configuration.