Model Monitoring

Now that we have deployed our model and are making predictions with it, we can also use it in a web application.

To track the model's performance, we need a monitoring facility that shows how the model behaves on incoming data and whether its performance is degrading. When it degrades, we can retrain the model using the pipeline and deploy the next best model to production. In this way, we can automate the model life cycle.

To monitor the model, follow the steps below:

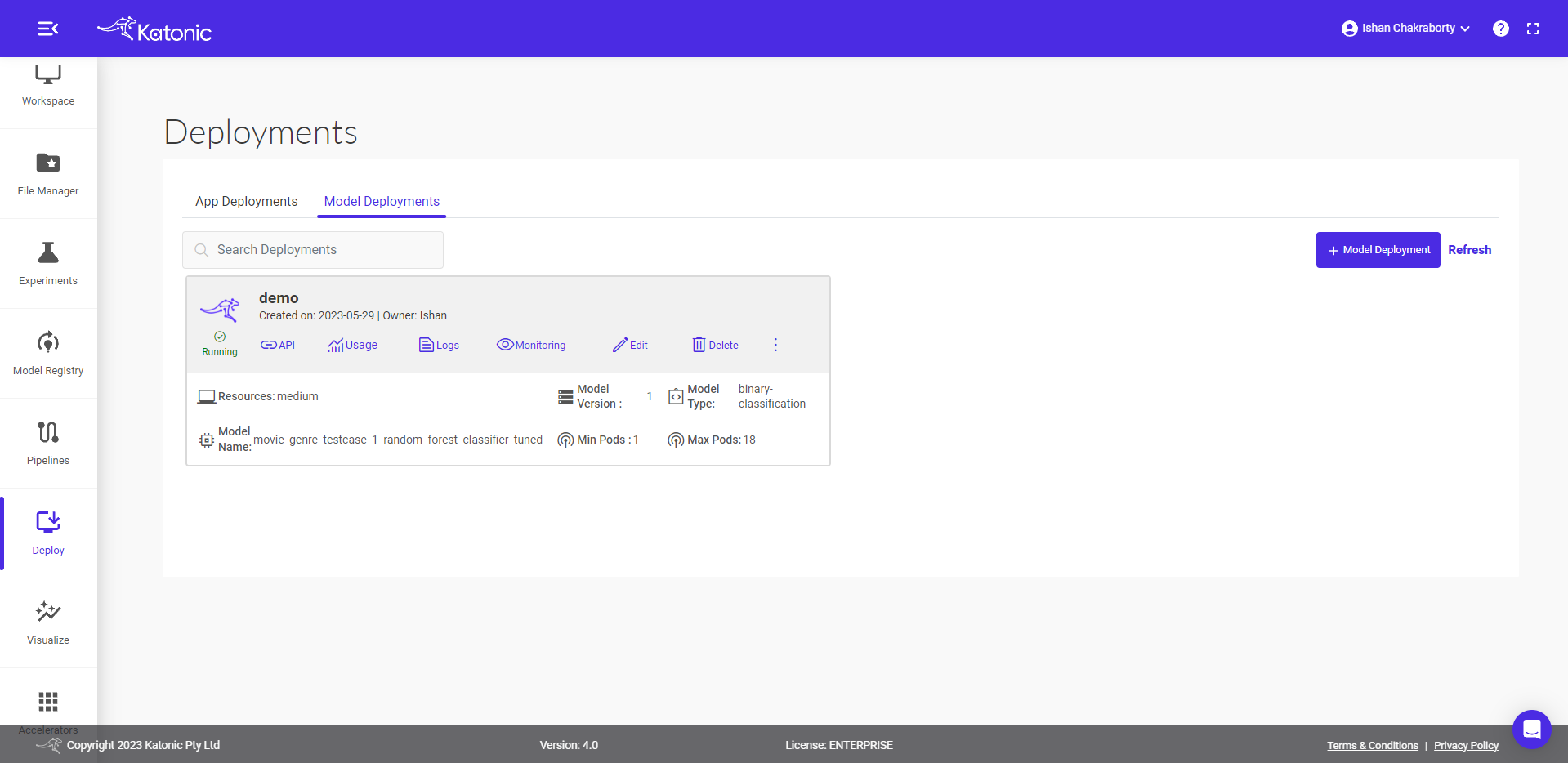

Go to the Deployment section in the Katonic Platform sidebar and find the model that you deployed.

Click the Monitoring icon to view your model's performance and all the metrics for the inference data.

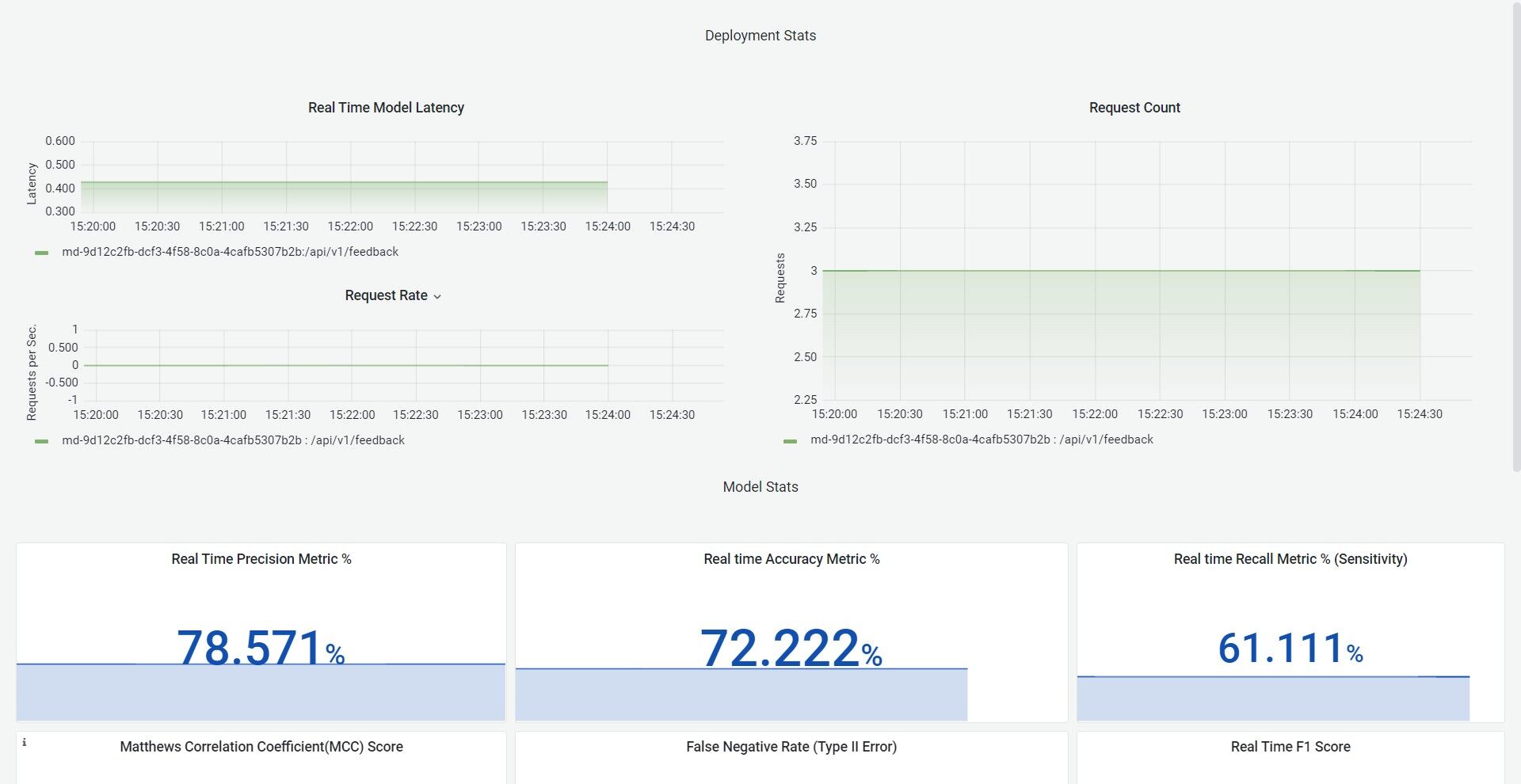

Clicking the Monitoring option opens a new window containing all the monitoring options, including the following metrics and performance indicators:

Memory Usage

CPU Usage

Deployment Statistics

- Real-Time Model Latency

- Request Count

Model Statistics

- Real-Time Precision Metric

- Real-Time Recall Metric

- Real-Time Accuracy Metric

- Real-Time Outlier Score

- Real-Time F1 Score

- Other metrics covering true positive, true negative, false positive, and false negative counts
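The model statistics listed above are standard classification metrics, and all of them can be derived from the four confusion counts (true/false positives and negatives). The sketch below shows how they relate for a binary classifier. It is an illustration only, not the Katonic Platform's implementation; `ConfusionCounts` and `classification_metrics` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts for a binary classifier over some window of inference data (hypothetical type)."""
    tp: int  # true positives
    tn: int  # true negatives
    fp: int  # false positives
    fn: int  # false negatives


def _safe_div(num: float, den: float) -> float:
    # Return 0.0 instead of raising when a denominator is zero (e.g. no positive predictions yet).
    return num / den if den else 0.0


def classification_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Compute precision, recall, accuracy and F1 from confusion counts (hypothetical helper)."""
    precision = _safe_div(c.tp, c.tp + c.fp)
    recall = _safe_div(c.tp, c.tp + c.fn)
    accuracy = _safe_div(c.tp + c.tn, c.tp + c.tn + c.fp + c.fn)
    # F1 is the harmonic mean of precision and recall.
    f1 = _safe_div(2 * precision * recall, precision + recall)
    return {"precision": precision, "recall": recall, "accuracy": accuracy, "f1": f1}


if __name__ == "__main__":
    counts = ConfusionCounts(tp=80, tn=90, fp=20, fn=10)
    for name, value in classification_metrics(counts).items():
        print(f"{name}: {value:.3f}")
```

With these example counts, precision is 80 / 100 = 0.8 and accuracy is 170 / 200 = 0.85. Tracking the raw counts alongside the ratios helps explain why a metric moved.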

Model Monitoring Dashboard

You can analyze the model's performance from the dashboard. If the model appears to be degrading, you can rerun the pipeline with a single click to train a new model on new or additional data.
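Deciding when a model "is getting degraded" can be made explicit with a simple rule. The sketch below is one possible approach, not a Katonic feature: it compares the rolling mean of a metric (for example, accuracy read from the dashboard) against the value measured at deployment and flags degradation when the drop exceeds a tolerance. The class name `DegradationMonitor`, the baseline, the tolerance and the window size are all hypothetical choices; triggering the actual pipeline run is left out.

```python
from collections import deque
from statistics import mean


class DegradationMonitor:
    """Flags degradation when the rolling mean of a metric drops below a baseline (hypothetical).

    baseline: metric value measured when the model was deployed (assumed known).
    tolerance: allowed absolute drop before retraining is suggested (assumed value).
    window: number of most recent observations that are averaged (assumed value).
    """

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 5) -> None:
        self.baseline = baseline
        self.tolerance = tolerance
        # A bounded deque automatically discards the oldest value once it is full.
        self.recent: deque = deque(maxlen=window)

    def observe(self, value: float) -> None:
        self.recent.append(value)

    def is_degraded(self) -> bool:
        # Wait for a full window so a single noisy reading does not trigger retraining.
        if len(self.recent) < self.recent.maxlen:
            return False
        return mean(self.recent) < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = DegradationMonitor(baseline=0.85, tolerance=0.05, window=3)
    for accuracy in [0.84, 0.82, 0.78, 0.76, 0.75]:
        monitor.observe(accuracy)
        if monitor.is_degraded():
            # In practice, this is the point at which you would rerun the training pipeline.
            print(f"degraded at accuracy={accuracy}; consider rerunning the pipeline")
```

Averaging over a window trades responsiveness for stability: a larger window reacts more slowly but is less likely to trigger retraining on a temporary dip.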